first commit

This commit is contained in:

commit

6d6c4736b0

@ -0,0 +1,14 @

Copyright (C) 2025 AIDC-AI
Licensed under the Apache License, Version 2.0 (the "License");
you may not use this file except in compliance with the License.
You may obtain a copy of the License at
http://www.apache.org/licenses/LICENSE-2.0
Unless required by applicable law or agreed to in writing, software
distributed under the License is distributed on an "AS IS" BASIS,
WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
See the License for the specific language governing permissions and
limitations under the License.

This model was trained based on the following models:
1. Qwen2.5 (https://huggingface.co/Qwen/Qwen2.5-3B-Instruct), license:(https://huggingface.co/Qwen/Qwen2.5-3B-Instruct/blob/main/LICENSE, SPDX-License-identifier: Apache-2.0).
2. AimV2 (https://huggingface.co/apple/aimv2-huge-patch14-448), license: Apple-Sample-Code-License (https://developer.apple.com/support/downloads/terms/apple-sample-code/Apple-Sample-Code-License.pdf)

|

||||

|

|

@ -0,0 +1,258 @@

|

|||

---

|

||||

license: apache-2.0

|

||||

datasets:

|

||||

- AIDC-AI/Ovis-dataset

|

||||

library_name: transformers

|

||||

tags:

|

||||

- MLLM

|

||||

pipeline_tag: image-text-to-text

|

||||

language:

|

||||

- en

|

||||

- zh

|

||||

---

|

||||

|

||||

# Ovis2-4B

|

||||

<div align="center">

|

||||

<img src=https://cdn-uploads.huggingface.co/production/uploads/637aebed7ce76c3b834cea37/3IK823BZ8w-mz_QfeYkDn.png width="30%"/>

|

||||

</div>

|

||||

|

||||

## Introduction

|

||||

[GitHub](https://github.com/AIDC-AI/Ovis) | [Paper](https://arxiv.org/abs/2405.20797)

|

||||

|

||||

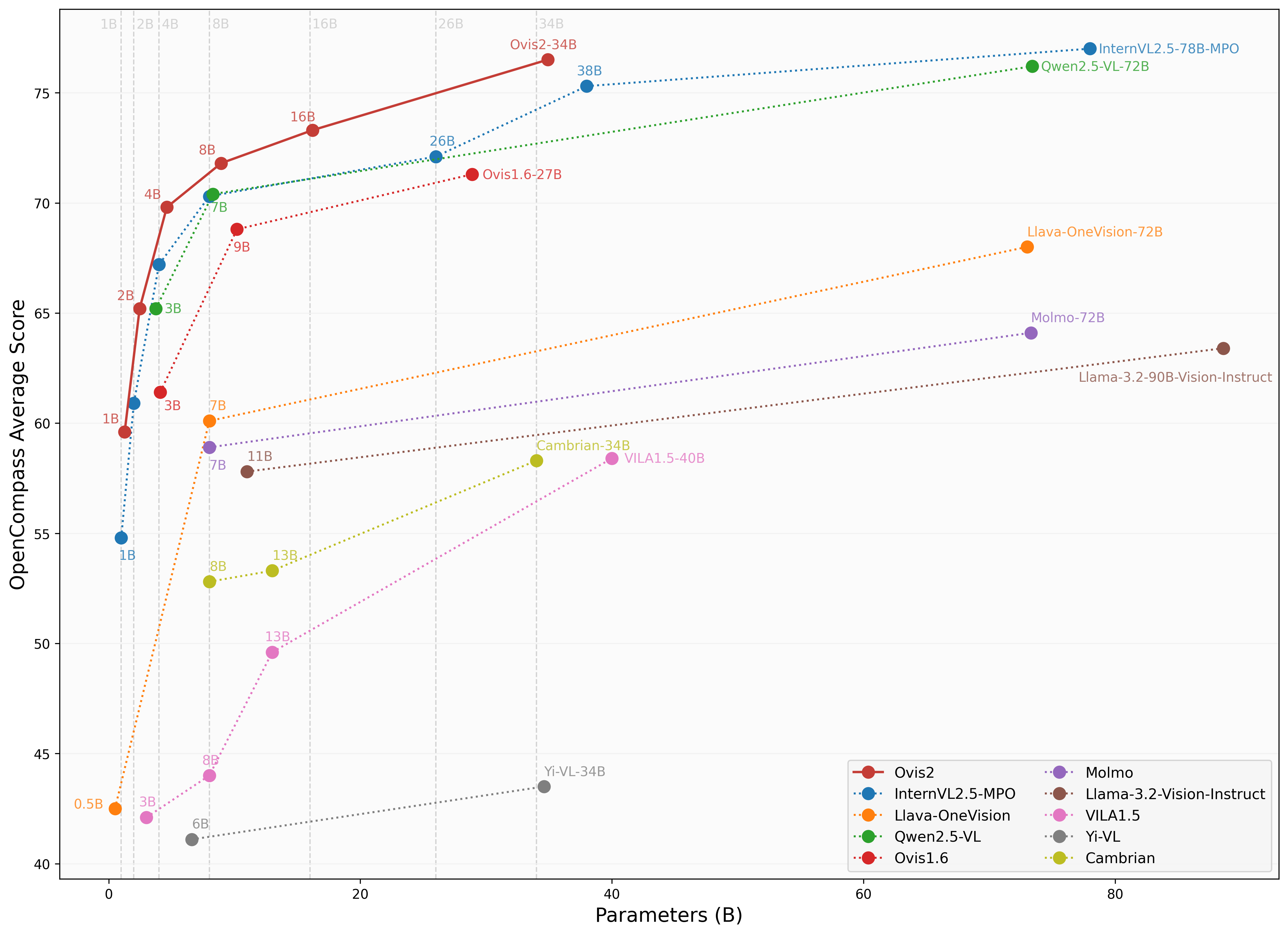

We are pleased to announce the release of **Ovis2**, our latest advancement in multi-modal large language models (MLLMs). Ovis2 inherits the innovative architectural design of the Ovis series, aimed at structurally aligning visual and textual embeddings. As the successor to Ovis1.6, Ovis2 incorporates significant improvements in both dataset curation and training methodologies.

**Key Features**:

- **Small Model Performance**: Optimized training strategies give small-scale models a higher capability density, so that they can compete with models from larger size tiers.

- **Enhanced Reasoning Capabilities**: Chain-of-Thought (CoT) reasoning is significantly strengthened by combining instruction tuning with preference learning.

- **Video and Multi-Image Processing**: Video and multi-image data are incorporated into training to improve the handling of complex visual information across frames and images.

- **Multilingual Support and OCR**: Multilingual OCR is extended beyond English and Chinese, and structured data extraction from complex visual elements such as tables and charts is improved.

<div align="center">
<img src="https://cdn-uploads.huggingface.co/production/uploads/637aebed7ce76c3b834cea37/XB-vgzDL6FshrSNGyZvzc.png" width="100%" />
</div>

## Model Zoo

| Ovis MLLMs | ViT | LLM | Model Weights | Demo |
|:-----------|:-----------------------:|:---------------------:|:-------------------------------------------------------:|:--------------------------------------------------------:|
| Ovis2-1B | aimv2-large-patch14-448 | Qwen2.5-0.5B-Instruct | [Huggingface](https://huggingface.co/AIDC-AI/Ovis2-1B) | [Space](https://huggingface.co/spaces/AIDC-AI/Ovis2-1B) |
| Ovis2-2B | aimv2-large-patch14-448 | Qwen2.5-1.5B-Instruct | [Huggingface](https://huggingface.co/AIDC-AI/Ovis2-2B) | [Space](https://huggingface.co/spaces/AIDC-AI/Ovis2-2B) |
| Ovis2-4B | aimv2-huge-patch14-448 | Qwen2.5-3B-Instruct | [Huggingface](https://huggingface.co/AIDC-AI/Ovis2-4B) | [Space](https://huggingface.co/spaces/AIDC-AI/Ovis2-4B) |
| Ovis2-8B | aimv2-huge-patch14-448 | Qwen2.5-7B-Instruct | [Huggingface](https://huggingface.co/AIDC-AI/Ovis2-8B) | [Space](https://huggingface.co/spaces/AIDC-AI/Ovis2-8B) |
| Ovis2-16B | aimv2-huge-patch14-448 | Qwen2.5-14B-Instruct | [Huggingface](https://huggingface.co/AIDC-AI/Ovis2-16B) | [Space](https://huggingface.co/spaces/AIDC-AI/Ovis2-16B) |
| Ovis2-34B | aimv2-1B-patch14-448 | Qwen2.5-32B-Instruct | [Huggingface](https://huggingface.co/AIDC-AI/Ovis2-34B) | - |

## Performance
We use [VLMEvalKit](https://github.com/open-compass/VLMEvalKit), as employed in the OpenCompass [multimodal](https://rank.opencompass.org.cn/leaderboard-multimodal) and [reasoning](https://rank.opencompass.org.cn/leaderboard-multimodal-reasoning) leaderboards, to evaluate Ovis2.

### Image Benchmark
| Benchmark | Qwen2.5-VL-7B | InternVL2.5-8B-MPO | MiniCPM-o-2.6 | Ovis1.6-9B | InternVL2.5-4B-MPO | Ovis2-4B | Ovis2-8B |
|:-----------------------------|:---------------:|:--------------------:|:---------------:|:------------:|:--------------------:|:----------:|:----------:|
| MMBench-V1.1<sub>test</sub> | 82.6 | 82.0 | 80.6 | 80.5 | 77.8 | 81.4 | **83.6** |
| MMStar | 64.1 | **65.2** | 63.3 | 62.9 | 61 | 61.9 | 64.6 |
| MMMU<sub>val</sub> | 56.2 | 54.8 | 50.9 | 55 | 51.8 | 49.0 | **57.4** |
| MathVista<sub>testmini</sub> | 65.8 | 67.9 | **73.3** | 67.3 | 64.1 | 69.6 | 71.8 |
| HallusionBench | **56.3** | 51.7 | 51.1 | 52.2 | 47.5 | 53.8 | **56.3** |
| AI2D | 84.1 | 84.5 | 86.1 | 84.4 | 81.5 | 85.7 | **86.6** |
| OCRBench | 87.7 | 88.2 | 88.9 | 83 | 87.9 | **91.1** | 89.1 |
| MMVet | 66.6 | **68.1** | 67.2 | 65 | 66 | 65.5 | 65.1 |
| MMBench<sub>test</sub> | 83.4 | 83.2 | 83.2 | 82.7 | 79.6 | 83.2 | **84.9** |
| MMT-Bench<sub>val</sub> | 62.7 | 62.5 | 62.3 | 64.9 | 61.6 | 65.2 | **66.6** |
| RealWorldQA | 68.8 | 71.1 | 68.0 | 70.7 | 64.4 | 71.1 | **72.5** |
| BLINK | 56.1 | **56.6** | 53.9 | 48.5 | 50.6 | 53.0 | 54.3 |
| QBench | 77.9 | 73.8 | 78.7 | 76.7 | 71.5 | 78.1 | **78.9** |
| ABench | 75.6 | 77.0 | **77.5** | 74.4 | 75.9 | **77.5** | 76.4 |
| MTVQA | 28.5 | 27.2 | 23.1 | 19.2 | 28 | 29.4 | **29.7** |

### Video Benchmark
| Benchmark | Qwen2.5-VL-7B | InternVL2.5-8B | LLaVA-OV-7B | InternVL2.5-4B | Ovis2-4B | Ovis2-8B |
|:--------------------|:-------------:|:--------------:|:------------------:|:--------------:|:---------:|:-------------:|
| VideoMME(wo/w-subs) | 65.1/71.6 | 64.2/66.9 | 58.2/61.5 | 62.3/63.6 | 64.0/66.3 | **68.0/71.6** |
| MVBench | 69.6 | **72.0** | 56.7 | 71.6 | 68.45 | 68.15 |
| MLVU(M-Avg/G-Avg) | 70.2/- | 68.9/- | 64.7/- | 68.3/- | 70.8/4.23 | **76.4**/4.25 |
| MMBench-Video | 1.79 | 1.68 | - | 1.73 | 1.69 | **1.85** |
| TempCompass | **71.7** | - | - | - | 67.02 | 69.28 |

## Usage
Below is a code snippet demonstrating how to run Ovis with various input types. For additional usage instructions, including the inference wrapper and Gradio UI, please refer to [Ovis GitHub](https://github.com/AIDC-AI/Ovis?tab=readme-ov-file#inference).
```bash
pip install torch==2.4.0 transformers==4.46.2 numpy==1.25.0 pillow==10.3.0
pip install flash-attn==2.7.0.post2 --no-build-isolation
```
```python
import torch
from PIL import Image
from transformers import AutoModelForCausalLM

# load model
model = AutoModelForCausalLM.from_pretrained("AIDC-AI/Ovis2-4B",
                                             torch_dtype=torch.bfloat16,
                                             multimodal_max_length=32768,
                                             trust_remote_code=True).cuda()
text_tokenizer = model.get_text_tokenizer()
visual_tokenizer = model.get_visual_tokenizer()

# single-image input
image_path = '/data/images/example_1.jpg'
images = [Image.open(image_path)]
max_partition = 9
text = 'Describe the image.'
query = f'<image>\n{text}'

## cot-style input
# cot_suffix = "Provide a step-by-step solution to the problem, and conclude with 'the answer is' followed by the final solution."
# image_path = '/data/images/example_1.jpg'
# images = [Image.open(image_path)]
# max_partition = 9
# text = "What's the area of the shape?"
# query = f'<image>\n{text}\n{cot_suffix}'

## multiple-images input
# image_paths = [
#     '/data/images/example_1.jpg',
#     '/data/images/example_2.jpg',
#     '/data/images/example_3.jpg'
# ]
# images = [Image.open(image_path) for image_path in image_paths]
# max_partition = 4
# text = 'Describe each image.'
# query = '\n'.join([f'Image {i+1}: <image>' for i in range(len(images))]) + '\n' + text

## video input (require `pip install moviepy==1.0.3`)
# from moviepy.editor import VideoFileClip
# video_path = '/data/videos/example_1.mp4'
# num_frames = 12
# max_partition = 1
# text = 'Describe the video.'
# with VideoFileClip(video_path) as clip:
#     total_frames = int(clip.fps * clip.duration)
#     if total_frames <= num_frames:
#         sampled_indices = range(total_frames)
#     else:
#         stride = total_frames / num_frames
#         sampled_indices = [min(total_frames - 1, int((stride * i + stride * (i + 1)) / 2)) for i in range(num_frames)]
#     frames = [clip.get_frame(index / clip.fps) for index in sampled_indices]
#     frames = [Image.fromarray(frame, mode='RGB') for frame in frames]
# images = frames
# query = '\n'.join(['<image>'] * len(images)) + '\n' + text

## text-only input
# images = []
# max_partition = None
# text = 'Hello'
# query = text

# format conversation
prompt, input_ids, pixel_values = model.preprocess_inputs(query, images, max_partition=max_partition)
attention_mask = torch.ne(input_ids, text_tokenizer.pad_token_id)
input_ids = input_ids.unsqueeze(0).to(device=model.device)
attention_mask = attention_mask.unsqueeze(0).to(device=model.device)
if pixel_values is not None:
    pixel_values = pixel_values.to(dtype=visual_tokenizer.dtype, device=visual_tokenizer.device)
pixel_values = [pixel_values]

# generate output
with torch.inference_mode():
    gen_kwargs = dict(
        max_new_tokens=1024,
        do_sample=False,
        top_p=None,
        top_k=None,
        temperature=None,
        repetition_penalty=None,
        eos_token_id=model.generation_config.eos_token_id,
        pad_token_id=text_tokenizer.pad_token_id,
        use_cache=True
    )
    output_ids = model.generate(input_ids, pixel_values=pixel_values, attention_mask=attention_mask, **gen_kwargs)[0]
    output = text_tokenizer.decode(output_ids, skip_special_tokens=True)
    print(f'Output:\n{output}')
```
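
The commented-out video branch in the snippet above picks frames by splitting the clip into `num_frames` equal-width segments and taking the frame at the midpoint of each one. The sketch below isolates that index arithmetic so it can be checked without a video file or MoviePy. The helper name `sample_frame_indices` is hypothetical and not part of the Ovis code; only the formula is taken from the snippet.

```python
from typing import List


def sample_frame_indices(total_frames: int, num_frames: int) -> List[int]:
    """Return up to `num_frames` frame indices, one per equal-width segment.

    Hypothetical helper: it mirrors the index formula in the commented-out
    video example and is not an Ovis API.
    """
    if total_frames <= num_frames:
        # Short clips: keep every frame.
        return list(range(total_frames))
    stride = total_frames / num_frames
    # Midpoint of segment i is (stride * i + stride * (i + 1)) / 2,
    # clamped so it never exceeds the last valid frame index.
    return [
        min(total_frames - 1, int((stride * i + stride * (i + 1)) / 2))
        for i in range(num_frames)
    ]


print(sample_frame_indices(total_frames=120, num_frames=12))
print(sample_frame_indices(total_frames=5, num_frames=12))
```

For a 120-frame clip and 12 samples the stride is 10, so the midpoints fall on frames 5, 15, and so on up to 115. A clip shorter than `num_frames` is returned in full.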

<details>
<summary>Batch Inference</summary>

```python
import torch
from PIL import Image
from transformers import AutoModelForCausalLM

# load model
model = AutoModelForCausalLM.from_pretrained("AIDC-AI/Ovis2-4B",
                                             torch_dtype=torch.bfloat16,
                                             multimodal_max_length=32768,
                                             trust_remote_code=True).cuda()
text_tokenizer = model.get_text_tokenizer()
visual_tokenizer = model.get_visual_tokenizer()

# preprocess inputs
batch_inputs = [
    ('/data/images/example_1.jpg', 'What colors dominate the image?'),
    ('/data/images/example_2.jpg', 'What objects are depicted in this image?'),
    ('/data/images/example_3.jpg', 'Is there any text in the image?')
]

batch_input_ids = []
batch_attention_mask = []
batch_pixel_values = []

for image_path, text in batch_inputs:
    image = Image.open(image_path)
    query = f'<image>\n{text}'
    prompt, input_ids, pixel_values = model.preprocess_inputs(query, [image], max_partition=9)
    attention_mask = torch.ne(input_ids, text_tokenizer.pad_token_id)
    batch_input_ids.append(input_ids.to(device=model.device))
    batch_attention_mask.append(attention_mask.to(device=model.device))
    batch_pixel_values.append(pixel_values.to(dtype=visual_tokenizer.dtype, device=visual_tokenizer.device))

batch_input_ids = torch.nn.utils.rnn.pad_sequence([i.flip(dims=[0]) for i in batch_input_ids], batch_first=True,
                                                  padding_value=0.0).flip(dims=[1])
batch_input_ids = batch_input_ids[:, -model.config.multimodal_max_length:]
batch_attention_mask = torch.nn.utils.rnn.pad_sequence([i.flip(dims=[0]) for i in batch_attention_mask],
                                                       batch_first=True, padding_value=False).flip(dims=[1])
batch_attention_mask = batch_attention_mask[:, -model.config.multimodal_max_length:]

# generate outputs
with torch.inference_mode():
    gen_kwargs = dict(
        max_new_tokens=1024,
        do_sample=False,
        top_p=None,
        top_k=None,
        temperature=None,
        repetition_penalty=None,
        eos_token_id=model.generation_config.eos_token_id,
        pad_token_id=text_tokenizer.pad_token_id,
        use_cache=True
    )
    output_ids = model.generate(batch_input_ids, pixel_values=batch_pixel_values, attention_mask=batch_attention_mask,
                                **gen_kwargs)

for i in range(len(batch_inputs)):
    output = text_tokenizer.decode(output_ids[i], skip_special_tokens=True)
    print(f'Output {i + 1}:\n{output}\n')
```

</details>
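
The batch example left-pads its inputs: each sequence is flipped, right-padded with `pad_sequence`, and the batch is flipped back, so every prompt ends at the last column and generated tokens are appended directly after it. The batch is then truncated to the trailing `multimodal_max_length` columns. The sketch below shows the same idea on plain Python lists. The helper `left_pad` is hypothetical, and it simplifies the mask by treating every original token as attended, whereas the snippet above derives the mask from `pad_token_id`.

```python
from typing import List, Sequence, Tuple


def left_pad(
    sequences: Sequence[Sequence[int]],
    pad_value: int,
    max_length: int,
) -> Tuple[List[List[int]], List[List[bool]]]:
    """Left-pad token id lists to a common width and build attention masks.

    Hypothetical helper for illustration only; not part of the Ovis code.
    Assumes `max_length` is a positive integer.
    """
    width = max(len(seq) for seq in sequences)
    padded, masks = [], []
    for seq in sequences:
        n_pad = width - len(seq)
        row = [pad_value] * n_pad + list(seq)        # padding goes on the left
        mask = [False] * n_pad + [True] * len(seq)   # padded positions are masked out
        # Keep only the trailing `max_length` positions, like `[:, -max_length:]`.
        padded.append(row[-max_length:])
        masks.append(mask[-max_length:])
    return padded, masks


ids, mask = left_pad([[1, 2, 3], [4]], pad_value=0, max_length=8)
print(ids)
print(mask)
```

With the two toy sequences above, the shorter one becomes `[0, 0, 4]` with mask `[False, False, True]`, so both rows end on a real token.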

## Citation
If you find Ovis useful, please consider citing the paper:
```
@article{lu2024ovis,
  title={Ovis: Structural Embedding Alignment for Multimodal Large Language Model},
  author={Shiyin Lu and Yang Li and Qing-Guo Chen and Zhao Xu and Weihua Luo and Kaifu Zhang and Han-Jia Ye},
  year={2024},
  journal={arXiv:2405.20797}
}
```

## License
This project is licensed under the [Apache License, Version 2.0](https://www.apache.org/licenses/LICENSE-2.0.txt) (SPDX-License-Identifier: Apache-2.0).

## Disclaimer
We used compliance-checking algorithms during training to ensure, to the best of our ability, that the trained model is compliant. Due to the complexity of the data and the diversity of language model usage scenarios, we cannot guarantee that the model is completely free of copyright issues or improper content. If you believe anything infringes on your rights or generates improper content, please contact us, and we will promptly address the matter.

@ -0,0 +1,24 @

{
  "</tool_call>": 151658,
  "<tool_call>": 151657,
  "<|box_end|>": 151649,
  "<|box_start|>": 151648,
  "<|endoftext|>": 151643,
  "<|file_sep|>": 151664,
  "<|fim_middle|>": 151660,
  "<|fim_pad|>": 151662,
  "<|fim_prefix|>": 151659,
  "<|fim_suffix|>": 151661,
  "<|im_end|>": 151645,
  "<|im_start|>": 151644,
  "<|image_pad|>": 151655,
  "<|object_ref_end|>": 151647,
  "<|object_ref_start|>": 151646,
  "<|quad_end|>": 151651,
  "<|quad_start|>": 151650,
  "<|repo_name|>": 151663,
  "<|video_pad|>": 151656,
  "<|vision_end|>": 151653,
  "<|vision_pad|>": 151654,
  "<|vision_start|>": 151652
}

@ -0,0 +1,256 @

{
  "architectures": [
    "Ovis"
  ],
  "auto_map": {
    "AutoConfig": "configuration_ovis.OvisConfig",
    "AutoModelForCausalLM": "modeling_ovis.Ovis"
  },
  "conversation_formatter_class": "QwenConversationFormatter",
  "disable_tie_weight": false,
  "hidden_size": 2048,
  "llm_attn_implementation": "flash_attention_2",
  "llm_config": {
    "_attn_implementation_autoset": true,
    "_name_or_path": "Qwen/Qwen2.5-3B-Instruct",
    "add_cross_attention": false,
    "architectures": [
      "Qwen2ForCausalLM"
    ],
    "attention_dropout": 0.0,
    "bad_words_ids": null,
    "begin_suppress_tokens": null,
    "bos_token_id": 151643,
    "chunk_size_feed_forward": 0,
    "cross_attention_hidden_size": null,
    "decoder_start_token_id": null,
    "diversity_penalty": 0.0,
    "do_sample": false,
    "early_stopping": false,
    "encoder_no_repeat_ngram_size": 0,
    "eos_token_id": 151645,
    "exponential_decay_length_penalty": null,
    "finetuning_task": null,
    "forced_bos_token_id": null,
    "forced_eos_token_id": null,
    "hidden_act": "silu",
    "hidden_size": 2048,
    "id2label": {
      "0": "LABEL_0",
      "1": "LABEL_1"
    },
    "initializer_range": 0.02,
    "intermediate_size": 11008,
    "is_decoder": false,
    "is_encoder_decoder": false,
    "label2id": {
      "LABEL_0": 0,
      "LABEL_1": 1
    },
    "length_penalty": 1.0,
    "max_length": 20,
    "max_position_embeddings": 32768,
    "max_window_layers": 70,
    "min_length": 0,
    "model_type": "qwen2",
    "no_repeat_ngram_size": 0,
    "num_attention_heads": 16,
    "num_beam_groups": 1,
    "num_beams": 1,
    "num_hidden_layers": 36,
    "num_key_value_heads": 2,
    "num_return_sequences": 1,
    "output_attentions": false,
    "output_hidden_states": false,
    "output_scores": false,
    "pad_token_id": null,
    "prefix": null,
    "problem_type": null,
    "pruned_heads": {},
    "remove_invalid_values": false,
    "repetition_penalty": 1.0,
    "return_dict": true,
    "return_dict_in_generate": false,
    "rms_norm_eps": 1e-06,
    "rope_scaling": null,
    "rope_theta": 1000000.0,
    "sep_token_id": null,
    "sliding_window": null,
    "suppress_tokens": null,
    "task_specific_params": null,
    "temperature": 1.0,
    "tf_legacy_loss": false,
    "tie_encoder_decoder": false,
    "tie_word_embeddings": true,
    "tokenizer_class": null,
    "top_k": 50,
    "top_p": 1.0,
    "torch_dtype": "bfloat16",
    "torchscript": false,
    "typical_p": 1.0,
    "use_bfloat16": false,
    "use_cache": true,
    "use_sliding_window": false,
    "vocab_size": 151936
  },
  "model_type": "ovis",
  "multimodal_max_length": 32768,
  "torch_dtype": "bfloat16",
  "transformers_version": "4.46.2",
  "use_cache": true,
  "visual_tokenizer_config": {
    "_attn_implementation_autoset": true,
    "_name_or_path": "",
    "add_cross_attention": false,
    "architectures": null,
    "backbone_config": {
      "_attn_implementation_autoset": true,
      "_name_or_path": "apple/aimv2-huge-patch14-448",
      "add_cross_attention": false,
      "architectures": [
        "AIMv2Model"
      ],
      "attention_dropout": 0.0,
      "auto_map": {
        "AutoConfig": "configuration_aimv2.AIMv2Config",
        "AutoModel": "modeling_aimv2.AIMv2Model",
        "FlaxAutoModel": "modeling_flax_aimv2.FlaxAIMv2Model"
      },
      "bad_words_ids": null,
      "begin_suppress_tokens": null,
      "bos_token_id": null,
      "chunk_size_feed_forward": 0,
      "cross_attention_hidden_size": null,
      "decoder_start_token_id": null,
      "diversity_penalty": 0.0,
      "do_sample": false,
      "early_stopping": false,
      "encoder_no_repeat_ngram_size": 0,
      "eos_token_id": null,
      "exponential_decay_length_penalty": null,
      "finetuning_task": null,
      "forced_bos_token_id": null,
      "forced_eos_token_id": null,
      "hidden_size": 1536,
      "id2label": {
        "0": "LABEL_0",
        "1": "LABEL_1"
      },
      "image_size": 448,
      "intermediate_size": 4096,
      "is_decoder": false,
      "is_encoder_decoder": false,
      "label2id": {
        "LABEL_0": 0,
        "LABEL_1": 1
      },
      "length_penalty": 1.0,
      "max_length": 20,
      "min_length": 0,
      "model_type": "aimv2",
      "no_repeat_ngram_size": 0,
      "num_attention_heads": 12,
      "num_beam_groups": 1,
      "num_beams": 1,
      "num_channels": 3,
      "num_hidden_layers": 24,
      "num_return_sequences": 1,
      "output_attentions": false,
      "output_hidden_states": false,
      "output_scores": false,
      "pad_token_id": null,
      "patch_size": 14,
      "prefix": null,
      "problem_type": null,
      "projection_dropout": 0.0,
      "pruned_heads": {},
      "qkv_bias": false,
      "remove_invalid_values": false,
      "repetition_penalty": 1.0,
      "return_dict": true,
      "return_dict_in_generate": false,
      "rms_norm_eps": 1e-05,
      "sep_token_id": null,
      "suppress_tokens": null,
      "task_specific_params": null,
      "temperature": 1.0,
      "tf_legacy_loss": false,
      "tie_encoder_decoder": false,
      "tie_word_embeddings": true,
      "tokenizer_class": null,
      "top_k": 50,
      "top_p": 1.0,
      "torch_dtype": "float32",
      "torchscript": false,
      "typical_p": 1.0,
      "use_bfloat16": false,
      "use_bias": false
    },
    "backbone_kwargs": {},
    "bad_words_ids": null,
    "begin_suppress_tokens": null,
    "bos_token_id": null,
    "chunk_size_feed_forward": 0,
    "cross_attention_hidden_size": null,
    "decoder_start_token_id": null,
    "depths": null,
    "diversity_penalty": 0.0,
    "do_sample": false,
    "drop_cls_token": false,
    "early_stopping": false,
    "encoder_no_repeat_ngram_size": 0,
    "eos_token_id": null,
    "exponential_decay_length_penalty": null,
    "finetuning_task": null,
    "forced_bos_token_id": null,
    "forced_eos_token_id": null,
    "hidden_stride": 2,
    "id2label": {
      "0": "LABEL_0",
      "1": "LABEL_1"
    },
    "is_decoder": false,
    "is_encoder_decoder": false,
    "label2id": {
      "LABEL_0": 0,
      "LABEL_1": 1
    },
    "length_penalty": 1.0,
    "max_length": 20,
    "min_length": 0,
    "model_type": "aimv2_visual_tokenizer",
    "no_repeat_ngram_size": 0,
    "num_beam_groups": 1,
    "num_beams": 1,
    "num_return_sequences": 1,
    "output_attentions": false,
    "output_hidden_states": false,
    "output_scores": false,
    "pad_token_id": null,
    "prefix": null,
    "problem_type": null,
    "pruned_heads": {},
    "remove_invalid_values": false,
    "repetition_penalty": 1.0,
    "return_dict": true,
    "return_dict_in_generate": false,
    "sep_token_id": null,
    "suppress_tokens": null,
    "task_specific_params": null,
    "tau": 1.0,
    "temperature": 1.0,
    "tf_legacy_loss": false,
    "tie_encoder_decoder": false,
    "tie_word_embeddings": true,
    "tokenize_function": "softmax",
    "tokenizer_class": null,
    "top_k": 50,
    "top_p": 1.0,
    "torch_dtype": null,
    "torchscript": false,
    "typical_p": 1.0,
    "use_bfloat16": false,
    "use_indicators": false,
    "vocab_size": 65536
  }
}

@ -0,0 +1,63 @

# copied from https://huggingface.co/apple/aimv2-huge-patch14-448
from typing import Any

from transformers.configuration_utils import PretrainedConfig

__all__ = ["AIMv2Config"]


class AIMv2Config(PretrainedConfig):
    """This is the configuration class to store the configuration of an [`AIMv2Model`].

    Instantiating a configuration with the defaults will yield a similar configuration
    to that of the [apple/aimv2-large-patch14-224](https://huggingface.co/apple/aimv2-large-patch14-224).

    Args:
        hidden_size: Dimension of the hidden representations.
        intermediate_size: Dimension of the SwiGLU representations.
        num_hidden_layers: Number of hidden layers in the Transformer.
        num_attention_heads: Number of attention heads for each attention layer
            in the Transformer.
        num_channels: Number of input channels.
        image_size: Image size.
        patch_size: Patch size.
        rms_norm_eps: Epsilon value used for the RMS normalization layer.
        attention_dropout: Dropout ratio for attention probabilities.
        projection_dropout: Dropout ratio for the projection layer after the attention.
        qkv_bias: Whether to add a bias to the queries, keys and values.
        use_bias: Whether to add a bias in the feed-forward and projection layers.
        kwargs: Keyword arguments for the [`PretrainedConfig`].
    """

    model_type: str = "aimv2"

    def __init__(
        self,
        hidden_size: int = 1024,
        intermediate_size: int = 2816,
        num_hidden_layers: int = 24,
        num_attention_heads: int = 8,
        num_channels: int = 3,
        image_size: int = 224,
        patch_size: int = 14,
        rms_norm_eps: float = 1e-5,
        attention_dropout: float = 0.0,
        projection_dropout: float = 0.0,
        qkv_bias: bool = False,
        use_bias: bool = False,
        **kwargs: Any,
    ):
        super().__init__(**kwargs)
        self.hidden_size = hidden_size
        self.intermediate_size = intermediate_size
        self.num_hidden_layers = num_hidden_layers
        self.num_attention_heads = num_attention_heads
        self.num_channels = num_channels
        self.patch_size = patch_size
        self.image_size = image_size
        self.attention_dropout = attention_dropout
        self.rms_norm_eps = rms_norm_eps

        self.projection_dropout = projection_dropout
        self.qkv_bias = qkv_bias
        self.use_bias = use_bias

|

||||

|

|

@ -0,0 +1,204 @@

|

|||

from abc import ABC, abstractmethod

|

||||

from typing import List, Dict, Union, Optional

|

||||

|

||||

from transformers import PretrainedConfig, AutoConfig, AutoModel

|

||||

from .configuration_aimv2 import AIMv2Config

|

||||

from .modeling_aimv2 import AIMv2Model

|

||||

|

||||

IGNORE_ID = -100

|

||||

IMAGE_TOKEN_ID = -200

|

||||

IMAGE_TOKEN = "<image>"

|

||||

IMAGE_ATOM_ID = -300

|

||||

IMAGE_INDICATOR_IDS = [-301, -302, -303, -304, -305]

|

||||

|

||||

AutoConfig.register("aimv2", AIMv2Config)

|

||||

AutoModel.register(AIMv2Config, AIMv2Model)

|

||||

|

||||

# ----------------------------------------------------------------------

|

||||

# Visual Tokenizer Configuration

|

||||

# ----------------------------------------------------------------------

|

||||

class BaseVisualTokenizerConfig(PretrainedConfig):

|

||||

def __init__(

|

||||

self,

|

||||

vocab_size=16384,

|

||||

tokenize_function="softmax",

|

||||

tau=1.0,

|

||||

depths=None,

|

||||

drop_cls_token=False,

|

||||

backbone_config: Optional[Union[PretrainedConfig, dict]] = None,

|

||||

hidden_stride: int = 1,

|

||||

**kwargs

|

||||

):

|

||||

super().__init__(**kwargs)

|

||||

self.vocab_size = vocab_size

|

||||

self.tokenize_function = tokenize_function

|

||||

self.tau = tau

|

||||

if isinstance(depths, str):

|

||||

depths = [int(x) for x in depths.split('|')]

|

||||

self.depths = depths

|

||||

self.backbone_kwargs = {}

|

||||

self.drop_cls_token = drop_cls_token

|

||||

if backbone_config is not None:

|

||||

assert isinstance(backbone_config, (PretrainedConfig, dict)), \

|

||||

f"expect `backbone_config` to be instance of PretrainedConfig or dict, but got {type(backbone_config)} type"

|

||||

if not isinstance(backbone_config, PretrainedConfig):

|

||||

model_type = backbone_config['model_type']

|

||||

backbone_config.pop('model_type')

|

||||

backbone_config = AutoConfig.for_model(model_type, **backbone_config)

|

||||

self.backbone_config = backbone_config

|

||||

self.hidden_stride = hidden_stride

|

||||

|

||||

|

||||

class Aimv2VisualTokenizerConfig(BaseVisualTokenizerConfig):

|

||||

model_type = "aimv2_visual_tokenizer"

|

||||

|

||||

def __init__(self, **kwargs):

|

||||

super().__init__(**kwargs)

|

||||

if self.drop_cls_token:

|

||||

self.drop_cls_token = False

|

||||

if self.depths:

|

||||

assert len(self.depths) == 1

|

||||

self.backbone_kwargs['num_hidden_layers'] = self.depths[0]

|

||||

|

||||

|

||||

AutoConfig.register("aimv2_visual_tokenizer", Aimv2VisualTokenizerConfig)

|

||||

|

||||

|

||||

# ----------------------------------------------------------------------

|

||||

# Ovis Configuration

|

||||

# ----------------------------------------------------------------------

|

||||

class OvisConfig(PretrainedConfig):

|

||||

model_type = "ovis"

|

||||

|

||||

def __init__(

|

||||

self,

|

||||

llm_config: Optional[Union[PretrainedConfig, dict]] = None,

|

||||

visual_tokenizer_config: Optional[Union[PretrainedConfig, dict]] = None,

|

||||

multimodal_max_length=8192,

|

||||

hidden_size=None,

|

||||

conversation_formatter_class=None,

|

||||

llm_attn_implementation=None,

|

||||

disable_tie_weight=False,

|

||||

**kwargs

|

||||

):

|

||||

super().__init__(**kwargs)

|

||||

if llm_config is not None:

|

||||

assert isinstance(llm_config, (PretrainedConfig, dict)), \

|

||||

f"expect `llm_config` to be instance of PretrainedConfig or dict, but got {type(llm_config)} type"

|

||||

if not isinstance(llm_config, PretrainedConfig):

|

||||

model_type = llm_config['model_type']

|

||||

llm_config.pop('model_type')

|

||||

llm_config = AutoConfig.for_model(model_type, **llm_config)

|

||||

self.llm_config = llm_config

|

||||

if visual_tokenizer_config is not None:

|

||||

assert isinstance(visual_tokenizer_config, (PretrainedConfig, dict)), \

|

||||

f"expect `visual_tokenizer_config` to be instance of PretrainedConfig or dict, but got {type(visual_tokenizer_config)} type"

|

||||

if not isinstance(visual_tokenizer_config, PretrainedConfig):

|

||||

model_type = visual_tokenizer_config['model_type']

|

||||

visual_tokenizer_config.pop('model_type')

|

||||

visual_tokenizer_config = AutoConfig.for_model(model_type, **visual_tokenizer_config)

|

||||

self.visual_tokenizer_config = visual_tokenizer_config

|

||||

self.multimodal_max_length = multimodal_max_length

|

||||

self.hidden_size = hidden_size

|

||||

self.conversation_formatter_class = conversation_formatter_class

|

||||

self.llm_attn_implementation = llm_attn_implementation

|

||||

self.disable_tie_weight = disable_tie_weight

|

||||

|

||||

|

||||

# ----------------------------------------------------------------------

|

||||

# Conversation Formatter

|

||||

# ----------------------------------------------------------------------

|

||||

class ConversationFormatter(ABC):

|

||||

support_tokenizer_types = None

|

||||

|

||||

def __init__(self, tokenizer):

|

||||

tokenizer_type = type(tokenizer).__name__

|

||||

assert tokenizer_type in self.support_tokenizer_types, \

|

||||

f'Invalid tokenizer type, expected one from `{self.support_tokenizer_types}`, but got `{tokenizer_type}`'

|

||||

self.tokenizer = tokenizer

|

||||

self.image_token = IMAGE_TOKEN

|

||||

self.image_token_id = IMAGE_TOKEN_ID

|

||||

self.ignore_id = IGNORE_ID

|

||||

|

||||

def _tokenize_with_image_symbol(self, text):

|

||||

text_chunks = [self.tokenizer(chunk, add_special_tokens=False).input_ids for chunk in

|

||||

text.split(self.image_token)]

|

||||

token_ids = []

|

||||

num_chunk = len(text_chunks)

|

||||

for i, chunk in enumerate(text_chunks):

|

||||

token_ids.extend(chunk)

|

||||

if i < num_chunk - 1:

|

||||

token_ids.append(self.image_token_id)

|

||||

return token_ids

|

||||

|

||||

@abstractmethod

|

||||

def format(self, conversations: List[Dict], generation_preface=None):

|

||||

pass

|

||||

|

||||

@abstractmethod

|

||||

def format_query(self, query, generation_preface=""):

|

||||

pass

|

||||

|

||||

|

||||

class QwenConversationFormatter(ConversationFormatter):

|

||||

support_tokenizer_types = ['QWenTokenizer', 'Qwen2TokenizerFast']

|

||||

|

||||

def __init__(self, tokenizer):

|

||||

super().__init__(tokenizer)

|

||||

self.from2role = {

|

||||

"system": "<|im_start|>system\n",

|

||||

"human": "<|im_start|>user\n",

|

||||

"gpt": "<|im_start|>assistant\n",

|

||||

}

|

||||

self.gpt_token_num = None

|

||||

self.im_end = "<|im_end|>\n"

|

||||

self.default_system_prompt = "You are a helpful assistant."

|

||||

|

||||

def format(self, conversations: List[Dict], generation_preface=None):

|

||||

if self.gpt_token_num is None:

|

||||

self.gpt_token_num = len(self.tokenizer(self.from2role["gpt"], add_special_tokens=False).input_ids)

|

||||

|

||||

if conversations[0]["from"] != "system":

|

||||

conversations.insert(0, {

|

||||

"from": "system",

|

||||

"value": self.default_system_prompt

|

||||

})

|

||||

|

||||

if generation_preface is not None:

|

||||

conversations.append({

|

||||

"from": "gpt",

|

||||

"value": generation_preface

|

||||

})

|

||||

|

||||

prompt = ""

|

||||

input_ids = []

|

||||

labels = []

|

||||

num_conversation = len(conversations)

|

||||

for i, conversation in enumerate(conversations):

|

||||

frm = conversation["from"]

|

||||

role = self.from2role[frm]

|

||||

message = conversation["value"]

|

||||

text = role + message

|

||||

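# Close every turn with `<|im_end|>` except a trailing generation preface, which is left open for the model to continue.
if i < num_conversation - 1 or generation_preface is None: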

|

||||

text += self.im_end

|

||||

prompt += text

|

||||

token_ids = self._tokenize_with_image_symbol(text)

|

||||

input_ids.extend(token_ids)

|

||||

label_ids = [self.ignore_id] * len(token_ids)

|

||||

if frm == "gpt" and generation_preface is None:

|

||||

# The `\n` after `<|im_end|>` carries no training signal, so the final `\n` token stays masked in the labels.

|

||||

label_ids[self.gpt_token_num:-1] = token_ids[self.gpt_token_num:-1]

|

||||

labels.extend(label_ids)

|

||||

|

||||

assert self._tokenize_with_image_symbol(prompt) == input_ids

|

||||

assert len(input_ids) == len(labels)

|

||||

|

||||

return prompt, input_ids, labels

|

||||

|

||||

def format_query(self, query, generation_preface=""):

|

||||

prompt, input_ids, _ = self.format([{

|

||||

"from": "human",

|

||||

"value": query

|

||||

}], generation_preface=generation_preface)

|

||||

|

||||

return prompt, input_ids
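
The image-placeholder handling and label masking in `ConversationFormatter` above can be illustrated without a real tokenizer. The following is a minimal sketch, not the repository's implementation: `toy_encode` is a hypothetical character-level stand-in for the Hugging Face tokenizer, `mask_labels` is a hypothetical helper name, and the values of `IMAGE_TOKEN`, `IMAGE_TOKEN_ID`, and `IGNORE_ID` are assumptions, since their definitions are not part of this section.

```python
from typing import Callable, List

# Assumed values for illustration only; the real constants are defined elsewhere.
IMAGE_TOKEN = "<image>"
IMAGE_TOKEN_ID = -200
IGNORE_ID = -100


def toy_encode(text: str) -> List[int]:
    """Hypothetical stand-in tokenizer: one id per character."""
    return [ord(ch) for ch in text]


def tokenize_with_image_symbol(
    text: str, encode: Callable[[str], List[int]] = toy_encode
) -> List[int]:
    """Tokenize each text chunk separately and join chunks with the image id."""
    chunks = [encode(chunk) for chunk in text.split(IMAGE_TOKEN)]
    token_ids: List[int] = []
    for i, chunk in enumerate(chunks):
        token_ids.extend(chunk)
        # One sentinel id per placeholder, never after the last chunk.
        if i < len(chunks) - 1:
            token_ids.append(IMAGE_TOKEN_ID)
    return token_ids


def mask_labels(token_ids: List[int], role_prefix_len: int) -> List[int]:
    """Supervise only the reply body: mask the role prefix and the final token."""
    labels = [IGNORE_ID] * len(token_ids)
    labels[role_prefix_len:-1] = token_ids[role_prefix_len:-1]
    return labels


ids = tokenize_with_image_symbol("a<image>b")
labels = mask_labels(toy_encode("R:hi\n"), role_prefix_len=2)
```

Here the two-character prefix `R:` plays the part of the assistant role header, and the trailing newline plays the part of the `\n` that follows `<|im_end|>`; both stay at the ignore value while the reply body is copied into the labels.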

|

||||

|

|

@ -0,0 +1,15 @@

|

|||

{

|

||||

"bos_token_id": 151643,

|

||||

"do_sample": true,

|

||||

"eos_token_id": [

|

||||

151645,

|

||||

151643

|

||||

],

|

||||

"multimodal_max_length": 32768,

|

||||

"pad_token_id": 151643,

|

||||

"repetition_penalty": 1.05,

|

||||

"temperature": 0.7,

|

||||

"top_k": 20,

|

||||

"top_p": 0.8,

|

||||

"transformers_version": "4.46.2"

|

||||

}
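
To see how the `temperature`, `top_k`, and `top_p` values in this generation config interact, the sketch below applies them to a toy distribution. It is a simplified illustration under stated assumptions, not the `transformers` implementation: `filter_distribution` and the example logits are hypothetical, and `repetition_penalty` is left out.

```python
import math
from typing import Dict


def filter_distribution(
    logits: Dict[str, float],
    temperature: float = 0.7,
    top_k: int = 20,
    top_p: float = 0.8,
) -> Dict[str, float]:
    """Return the renormalized candidate distribution after the three filters."""
    # 1. Temperature: dividing logits by a value below 1 sharpens the distribution.
    scaled = {tok: value / temperature for tok, value in logits.items()}
    peak = max(scaled.values())
    weights = {tok: math.exp(value - peak) for tok, value in scaled.items()}

    # 2. Top-k: keep only the k most likely tokens, then renormalize.
    ranked = sorted(weights.items(), key=lambda kv: kv[1], reverse=True)[:top_k]
    total = sum(weight for _, weight in ranked)
    ranked = [(tok, weight / total) for tok, weight in ranked]

    # 3. Top-p: keep the smallest prefix whose cumulative mass reaches top_p.
    kept = []
    cumulative = 0.0
    for tok, prob in ranked:
        kept.append((tok, prob))
        cumulative += prob
        if cumulative >= top_p:
            break

    kept_total = sum(prob for _, prob in kept)
    return {tok: prob / kept_total for tok, prob in kept}


example_logits = {"a": 2.0, "b": 1.0, "c": 0.0, "d": -1.0}
filtered = filter_distribution(example_logits)
```

With these example logits, only the two most likely tokens survive the `top_p` cutoff, and a token is then sampled from the renormalized result.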

|

||||

File diff suppressed because it is too large


|

|

@ -0,0 +1,619 @@

|

|||

{

|

||||

"metadata": {

|

||||

"total_size": 9232212972

|

||||

},

|

||||

"weight_map": {

|

||||

"llm.lm_head.weight": "model-00002-of-00002.safetensors",

|

||||

"llm.model.embed_tokens.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.0.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.0.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.0.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.0.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.0.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.0.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.0.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.0.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.0.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.0.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.0.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.0.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.1.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.1.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.1.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.1.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.1.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.1.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.1.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.1.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.1.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.1.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.1.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.1.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.10.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.10.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.10.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.10.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.10.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.10.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.10.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.10.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.10.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.10.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.10.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.10.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.11.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.11.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.11.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.11.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.11.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.11.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.11.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.11.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.11.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.11.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.11.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.11.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.12.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.12.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.12.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.12.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.12.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.12.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.12.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.12.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.12.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.12.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.12.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.12.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.13.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.13.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.13.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.13.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.13.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.13.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.13.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.13.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.13.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.13.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.13.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.13.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.14.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.14.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.14.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.14.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.14.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.14.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.14.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.14.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.14.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.14.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.14.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.14.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.15.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.15.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.15.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.15.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.15.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.15.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.15.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.15.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.15.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.15.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.15.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.15.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.16.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.16.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.16.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.16.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.16.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.16.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.16.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.16.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.16.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.16.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.16.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.16.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.17.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.17.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.17.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.17.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.17.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.17.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.17.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.17.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.17.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.17.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.17.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.17.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.18.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.18.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.18.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.18.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.18.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.18.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.18.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.18.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.18.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.18.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.18.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.18.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.19.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.19.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.19.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.19.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.19.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.19.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.19.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.19.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.19.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.19.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.19.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.19.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.2.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.2.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.2.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.2.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.2.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.2.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.2.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.2.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.2.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.2.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.2.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.2.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.20.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.20.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.20.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.20.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.20.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.20.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.20.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.20.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.20.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.20.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.20.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.20.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.21.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.21.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.21.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.21.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.21.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.21.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.21.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.21.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.21.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.21.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.21.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.21.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.22.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.22.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.22.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.22.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.22.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.22.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.22.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.22.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.22.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.22.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.22.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.22.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.23.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.23.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.23.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.23.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.23.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.23.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.23.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.23.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.23.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.23.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.23.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.23.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.24.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.24.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.24.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.24.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.24.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.24.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.24.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.24.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.24.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.24.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.24.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.24.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.25.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.25.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.25.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.25.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.25.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.25.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.25.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.25.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.25.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.25.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.25.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.25.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.26.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.26.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.26.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.26.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.26.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.26.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.26.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.26.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.26.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.26.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.26.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.26.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.27.input_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.27.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.27.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.27.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.27.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.27.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.27.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.27.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.27.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.27.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.27.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.27.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.28.input_layernorm.weight": "model-00002-of-00002.safetensors",

|

||||

"llm.model.layers.28.mlp.down_proj.weight": "model-00002-of-00002.safetensors",

|

||||

"llm.model.layers.28.mlp.gate_proj.weight": "model-00002-of-00002.safetensors",

|

||||

"llm.model.layers.28.mlp.up_proj.weight": "model-00002-of-00002.safetensors",

|

||||

"llm.model.layers.28.post_attention_layernorm.weight": "model-00002-of-00002.safetensors",

|

||||

"llm.model.layers.28.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.28.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.28.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.28.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

|

||||

"llm.model.layers.28.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

|

||||