forked from ailab/vit-gpt2-image-captioning

first commit

commit eca440e823

@@ -0,0 +1,27 @@
*.7z filter=lfs diff=lfs merge=lfs -text
*.arrow filter=lfs diff=lfs merge=lfs -text
*.bin filter=lfs diff=lfs merge=lfs -text
*.bin.* filter=lfs diff=lfs merge=lfs -text
*.bz2 filter=lfs diff=lfs merge=lfs -text
*.ftz filter=lfs diff=lfs merge=lfs -text
*.gz filter=lfs diff=lfs merge=lfs -text
*.h5 filter=lfs diff=lfs merge=lfs -text
*.joblib filter=lfs diff=lfs merge=lfs -text
*.lfs.* filter=lfs diff=lfs merge=lfs -text
*.model filter=lfs diff=lfs merge=lfs -text
*.msgpack filter=lfs diff=lfs merge=lfs -text
*.onnx filter=lfs diff=lfs merge=lfs -text
*.ot filter=lfs diff=lfs merge=lfs -text
*.parquet filter=lfs diff=lfs merge=lfs -text
*.pb filter=lfs diff=lfs merge=lfs -text
*.pt filter=lfs diff=lfs merge=lfs -text
*.pth filter=lfs diff=lfs merge=lfs -text
*.rar filter=lfs diff=lfs merge=lfs -text
saved_model/**/* filter=lfs diff=lfs merge=lfs -text
*.tar.* filter=lfs diff=lfs merge=lfs -text
*.tflite filter=lfs diff=lfs merge=lfs -text
*.tgz filter=lfs diff=lfs merge=lfs -text
*.xz filter=lfs diff=lfs merge=lfs -text
*.zip filter=lfs diff=lfs merge=lfs -text
*.zstandard filter=lfs diff=lfs merge=lfs -text
*tfevents* filter=lfs diff=lfs merge=lfs -text
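The attribute lines above route matching files through Git LFS. As a rough illustration only, the sketch below checks a base filename against a handful of those patterns with Python's `fnmatch`. The helper name `is_lfs_tracked` and the shortened pattern list are assumptions made for this example, and `fnmatch` only approximates Git's own attribute matching (directory patterns such as `saved_model/**/*` are left out).

```python
from fnmatch import fnmatch

# A subset of the LFS patterns from the attributes hunk above.
LFS_PATTERNS = ["*.bin", "*.bin.*", "*.h5", "*.onnx", "*.pt", "*.tar.*", "*tfevents*"]


def is_lfs_tracked(filename: str) -> bool:
    """Approximate check: does the base filename match any listed LFS pattern?"""
    return any(fnmatch(filename, pattern) for pattern in LFS_PATTERNS)


for name in ["pytorch_model.bin", "config.json", "events.out.tfevents.123"]:
    print(name, is_lfs_tracked(name))
```

Under these assumptions, large weight files such as `pytorch_model.bin` would be stored as LFS pointers, while small text files such as `config.json` stay in regular Git storage.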


@@ -0,0 +1,90 @@
---
tags:
- image-to-text
- image-captioning
license: apache-2.0
widget:
- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/savanna.jpg
  example_title: Savanna
- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/football-match.jpg
  example_title: Football Match
- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/airport.jpg
  example_title: Airport
---

# nlpconnect/vit-gpt2-image-captioning

|

||||

|

||||

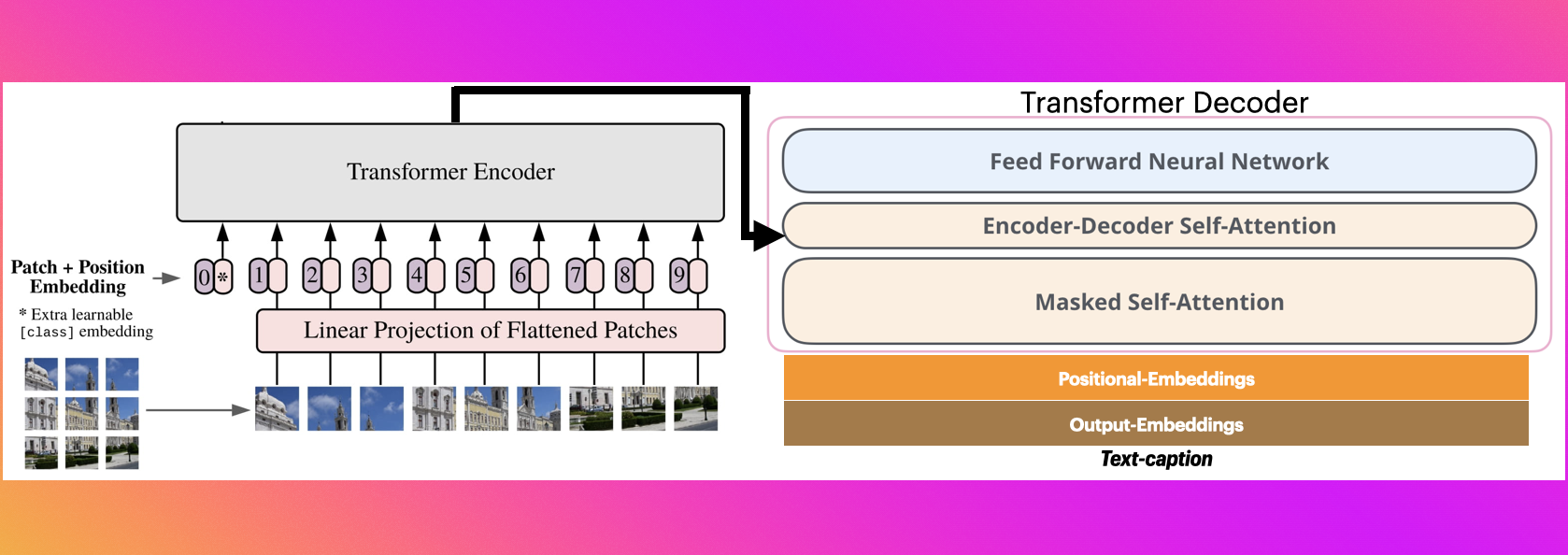

This is an image captioning model trained by @ydshieh in [flax ](https://github.com/huggingface/transformers/tree/main/examples/flax/image-captioning) this is pytorch version of [this](https://huggingface.co/ydshieh/vit-gpt2-coco-en-ckpts).

|

||||

|

||||

|

||||

# The Illustrated Image Captioning using transformers

* https://ankur3107.github.io/blogs/the-illustrated-image-captioning-using-transformers/

# Sample running code

```python

from transformers import VisionEncoderDecoderModel, ViTImageProcessor, AutoTokenizer
import torch
from PIL import Image

model = VisionEncoderDecoderModel.from_pretrained("nlpconnect/vit-gpt2-image-captioning")
feature_extractor = ViTImageProcessor.from_pretrained("nlpconnect/vit-gpt2-image-captioning")
tokenizer = AutoTokenizer.from_pretrained("nlpconnect/vit-gpt2-image-captioning")

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
model.to(device)


# Generation settings passed to model.generate()
max_length = 16
num_beams = 4
gen_kwargs = {"max_length": max_length, "num_beams": num_beams}
def predict_step(image_paths):
    # Load each image and convert it to RGB if needed
    images = []
    for image_path in image_paths:
        i_image = Image.open(image_path)
        if i_image.mode != "RGB":
            i_image = i_image.convert(mode="RGB")

        images.append(i_image)

    # Preprocess the batch and move it to the model's device
    pixel_values = feature_extractor(images=images, return_tensors="pt").pixel_values
    pixel_values = pixel_values.to(device)

    output_ids = model.generate(pixel_values, **gen_kwargs)

    # Decode token ids to text, dropping special tokens and surrounding whitespace
    preds = tokenizer.batch_decode(output_ids, skip_special_tokens=True)
    preds = [pred.strip() for pred in preds]
    return preds


predict_step(['doctor.e16ba4e4.jpg']) # ['a woman in a hospital bed with a woman in a hospital bed']

```

# Sample running code using transformers pipeline

```python

from transformers import pipeline

image_to_text = pipeline("image-to-text", model="nlpconnect/vit-gpt2-image-captioning")

image_to_text("https://ankur3107.github.io/assets/images/image-captioning-example.png")

# [{'generated_text': 'a soccer game with a player jumping to catch the ball '}]

```

# Contact for any help

* https://huggingface.co/ankur310794
* https://twitter.com/ankur310794
* http://github.com/ankur3107
* https://www.linkedin.com/in/ankur310794

@@ -0,0 +1,177 @@
{
  "_name_or_path": "vit-gpt-pt",
  "architectures": [
    "VisionEncoderDecoderModel"
  ],
  "bos_token_id": 50256,
  "decoder": {
    "_name_or_path": "",
    "activation_function": "gelu_new",
    "add_cross_attention": true,
    "architectures": [
      "GPT2LMHeadModel"
    ],
    "attn_pdrop": 0.1,
    "bad_words_ids": null,
    "bos_token_id": 50256,
    "chunk_size_feed_forward": 0,
    "cross_attention_hidden_size": null,
    "decoder_start_token_id": 50256,
    "diversity_penalty": 0.0,
    "do_sample": false,
    "early_stopping": false,
    "embd_pdrop": 0.1,
    "encoder_no_repeat_ngram_size": 0,
    "eos_token_id": 50256,
    "finetuning_task": null,
    "forced_bos_token_id": null,
    "forced_eos_token_id": null,
    "id2label": {
      "0": "LABEL_0",
      "1": "LABEL_1"
    },
    "initializer_range": 0.02,
    "is_decoder": true,
    "is_encoder_decoder": false,
    "label2id": {
      "LABEL_0": 0,
      "LABEL_1": 1
    },
    "layer_norm_epsilon": 1e-05,
    "length_penalty": 1.0,
    "max_length": 20,
    "min_length": 0,
    "model_type": "gpt2",
    "n_ctx": 1024,
    "n_embd": 768,
    "n_head": 12,
    "n_inner": null,
    "n_layer": 12,
    "n_positions": 1024,
    "no_repeat_ngram_size": 0,
    "num_beam_groups": 1,
    "num_beams": 1,
    "num_return_sequences": 1,
    "output_attentions": false,
    "output_hidden_states": false,
    "output_scores": false,
    "pad_token_id": 50256,
    "prefix": null,
    "problem_type": null,
    "pruned_heads": {},
    "remove_invalid_values": false,
    "reorder_and_upcast_attn": false,
    "repetition_penalty": 1.0,
    "resid_pdrop": 0.1,
    "return_dict": true,
    "return_dict_in_generate": false,
    "scale_attn_by_inverse_layer_idx": false,
    "scale_attn_weights": true,
    "sep_token_id": null,
    "summary_activation": null,
    "summary_first_dropout": 0.1,
    "summary_proj_to_labels": true,
    "summary_type": "cls_index",
    "summary_use_proj": true,
    "task_specific_params": {
      "text-generation": {
        "do_sample": true,
        "max_length": 50
      }
    },
    "temperature": 1.0,
    "tie_encoder_decoder": false,
    "tie_word_embeddings": true,
    "tokenizer_class": null,
    "top_k": 50,
    "top_p": 1.0,
    "torch_dtype": null,
    "torchscript": false,
    "transformers_version": "4.15.0",
    "use_bfloat16": false,
    "use_cache": true,
    "vocab_size": 50257
  },
  "decoder_start_token_id": 50256,
  "encoder": {
    "_name_or_path": "",
    "add_cross_attention": false,
    "architectures": [
      "ViTModel"
    ],
    "attention_probs_dropout_prob": 0.0,
    "bad_words_ids": null,
    "bos_token_id": null,
    "chunk_size_feed_forward": 0,
    "cross_attention_hidden_size": null,
    "decoder_start_token_id": null,
    "diversity_penalty": 0.0,
    "do_sample": false,
    "early_stopping": false,
    "encoder_no_repeat_ngram_size": 0,
    "eos_token_id": null,
    "finetuning_task": null,
    "forced_bos_token_id": null,
    "forced_eos_token_id": null,
    "hidden_act": "gelu",
    "hidden_dropout_prob": 0.0,
    "hidden_size": 768,
    "id2label": {
      "0": "LABEL_0",
      "1": "LABEL_1"
    },
    "image_size": 224,
    "initializer_range": 0.02,
    "intermediate_size": 3072,
    "is_decoder": false,
    "is_encoder_decoder": false,
    "label2id": {
      "LABEL_0": 0,
      "LABEL_1": 1
    },
    "layer_norm_eps": 1e-12,
    "length_penalty": 1.0,
    "max_length": 20,
    "min_length": 0,
    "model_type": "vit",
    "no_repeat_ngram_size": 0,
    "num_attention_heads": 12,
    "num_beam_groups": 1,
    "num_beams": 1,
    "num_channels": 3,
    "num_hidden_layers": 12,
    "num_return_sequences": 1,
    "output_attentions": false,
    "output_hidden_states": false,
    "output_scores": false,
    "pad_token_id": null,
    "patch_size": 16,
    "prefix": null,
    "problem_type": null,
    "pruned_heads": {},
    "qkv_bias": true,
    "remove_invalid_values": false,
    "repetition_penalty": 1.0,
    "return_dict": true,
    "return_dict_in_generate": false,
    "sep_token_id": null,
    "task_specific_params": null,
    "temperature": 1.0,
    "tie_encoder_decoder": false,
    "tie_word_embeddings": true,
    "tokenizer_class": null,
    "top_k": 50,
    "top_p": 1.0,
    "torch_dtype": null,
    "torchscript": false,
    "transformers_version": "4.15.0",
    "use_bfloat16": false
  },
  "eos_token_id": 50256,
  "is_encoder_decoder": true,
  "model_type": "vision-encoder-decoder",
  "pad_token_id": 50256,
  "tie_word_embeddings": false,
  "torch_dtype": "float32",
  "transformers_version": null
}
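To make the numbers in the config above more concrete, here is a minimal sketch of the arithmetic they imply. The dictionaries simply copy a few values from the `encoder` and `decoder` sections. The variable names are made up for this example, and the two rules noted in the comments (one prepended `[CLS]` token, a projection only when the widths differ) are stated as assumptions about how the ViT encoder and the encoder-decoder wrapper behave, not read from this commit.

```python
# Values copied from the "encoder" and "decoder" sections of the config above.
encoder_cfg = {"image_size": 224, "patch_size": 16, "hidden_size": 768}
decoder_cfg = {"n_embd": 768, "cross_attention_hidden_size": None}

# A 224x224 image cut into 16x16 patches gives a 14x14 grid.
patches_per_side = encoder_cfg["image_size"] // encoder_cfg["patch_size"]
num_patches = patches_per_side ** 2

# Assumption: the ViT encoder prepends one [CLS] token to the patch sequence.
encoder_seq_len = num_patches + 1

# Assumption: a projection layer between encoder and decoder is only needed
# when their hidden widths differ and no explicit cross-attention size is set.
needs_projection = (
    decoder_cfg["cross_attention_hidden_size"] is None
    and encoder_cfg["hidden_size"] != decoder_cfg["n_embd"]
)

print(num_patches, encoder_seq_len, needs_projection)
```

With these values the decoder would cross-attend over 197 encoder positions of width 768, matching the GPT-2 embedding width.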


File diff suppressed because it is too large


@@ -0,0 +1,17 @@
{
  "do_normalize": true,
  "do_resize": true,
  "feature_extractor_type": "ViTFeatureExtractor",
  "image_mean": [
    0.5,
    0.5,
    0.5
  ],
  "image_std": [
    0.5,
    0.5,
    0.5
  ],
  "resample": 2,
  "size": 224
}
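The preprocessing settings above describe a per-channel normalization with mean 0.5 and standard deviation 0.5. The sketch below shows that arithmetic on a single RGB pixel, assuming pixel values are first rescaled from 0-255 to the range [0, 1]; the helper name `normalize_pixel` is hypothetical, and the resize step (`size` 224, `resample` 2, which corresponds to bilinear resampling in Pillow) is not reproduced here.

```python
# Values copied from the preprocessing config above.
IMAGE_MEAN = [0.5, 0.5, 0.5]
IMAGE_STD = [0.5, 0.5, 0.5]


def normalize_pixel(rgb):
    """Rescale 0-255 channel values to [0, 1], then apply (x - mean) / std."""
    return [
        ((channel / 255.0) - mean) / std
        for channel, mean, std in zip(rgb, IMAGE_MEAN, IMAGE_STD)
    ]


print(normalize_pixel((0, 0, 0)))
print(normalize_pixel((255, 255, 255)))
```

Under that rescaling assumption, black maps to -1.0 and white to 1.0 in every channel, so the model sees inputs in the range [-1, 1].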


Binary file not shown.


@@ -0,0 +1 @@
{"bos_token": "<|endoftext|>", "eos_token": "<|endoftext|>", "unk_token": "<|endoftext|>", "pad_token": "<|endoftext|>"}


File diff suppressed because one or more lines are too long


@@ -0,0 +1 @@
{"unk_token": "<|endoftext|>", "bos_token": "<|endoftext|>", "eos_token": "<|endoftext|>", "add_prefix_space": false, "model_max_length": 1024, "special_tokens_map_file": null, "name_or_path": "./models/", "tokenizer_class": "GPT2Tokenizer"}


File diff suppressed because one or more lines are too long
