first commit

This commit is contained in:

parent

aa3d64d0bf

commit

d7dc046b95

|

|

@ -0,0 +1,202 @@

|

|||

|

||||

Apache License

|

||||

Version 2.0, January 2004

|

||||

http://www.apache.org/licenses/

|

||||

|

||||

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

|

||||

|

||||

1. Definitions.

|

||||

|

||||

"License" shall mean the terms and conditions for use, reproduction,

|

||||

and distribution as defined by Sections 1 through 9 of this document.

|

||||

|

||||

"Licensor" shall mean the copyright owner or entity authorized by

|

||||

the copyright owner that is granting the License.

|

||||

|

||||

"Legal Entity" shall mean the union of the acting entity and all

|

||||

other entities that control, are controlled by, or are under common

|

||||

control with that entity. For the purposes of this definition,

|

||||

"control" means (i) the power, direct or indirect, to cause the

|

||||

direction or management of such entity, whether by contract or

|

||||

otherwise, or (ii) ownership of fifty percent (50%) or more of the

|

||||

outstanding shares, or (iii) beneficial ownership of such entity.

|

||||

|

||||

"You" (or "Your") shall mean an individual or Legal Entity

|

||||

exercising permissions granted by this License.

|

||||

|

||||

"Source" form shall mean the preferred form for making modifications,

|

||||

including but not limited to software source code, documentation

|

||||

source, and configuration files.

|

||||

|

||||

"Object" form shall mean any form resulting from mechanical

|

||||

transformation or translation of a Source form, including but

|

||||

not limited to compiled object code, generated documentation,

|

||||

and conversions to other media types.

|

||||

|

||||

"Work" shall mean the work of authorship, whether in Source or

|

||||

Object form, made available under the License, as indicated by a

|

||||

copyright notice that is included in or attached to the work

|

||||

(an example is provided in the Appendix below).

|

||||

|

||||

"Derivative Works" shall mean any work, whether in Source or Object

|

||||

form, that is based on (or derived from) the Work and for which the

|

||||

editorial revisions, annotations, elaborations, or other modifications

|

||||

represent, as a whole, an original work of authorship. For the purposes

|

||||

of this License, Derivative Works shall not include works that remain

|

||||

separable from, or merely link (or bind by name) to the interfaces of,

|

||||

the Work and Derivative Works thereof.

|

||||

|

||||

"Contribution" shall mean any work of authorship, including

|

||||

the original version of the Work and any modifications or additions

|

||||

to that Work or Derivative Works thereof, that is intentionally

|

||||

submitted to Licensor for inclusion in the Work by the copyright owner

|

||||

or by an individual or Legal Entity authorized to submit on behalf of

|

||||

the copyright owner. For the purposes of this definition, "submitted"

|

||||

means any form of electronic, verbal, or written communication sent

|

||||

to the Licensor or its representatives, including but not limited to

|

||||

communication on electronic mailing lists, source code control systems,

|

||||

and issue tracking systems that are managed by, or on behalf of, the

|

||||

Licensor for the purpose of discussing and improving the Work, but

|

||||

excluding communication that is conspicuously marked or otherwise

|

||||

designated in writing by the copyright owner as "Not a Contribution."

|

||||

|

||||

"Contributor" shall mean Licensor and any individual or Legal Entity

|

||||

on behalf of whom a Contribution has been received by Licensor and

|

||||

subsequently incorporated within the Work.

|

||||

|

||||

2. Grant of Copyright License. Subject to the terms and conditions of

|

||||

this License, each Contributor hereby grants to You a perpetual,

|

||||

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

||||

copyright license to reproduce, prepare Derivative Works of,

|

||||

publicly display, publicly perform, sublicense, and distribute the

|

||||

Work and such Derivative Works in Source or Object form.

|

||||

|

||||

3. Grant of Patent License. Subject to the terms and conditions of

|

||||

this License, each Contributor hereby grants to You a perpetual,

|

||||

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

||||

(except as stated in this section) patent license to make, have made,

|

||||

use, offer to sell, sell, import, and otherwise transfer the Work,

|

||||

where such license applies only to those patent claims licensable

|

||||

by such Contributor that are necessarily infringed by their

|

||||

Contribution(s) alone or by combination of their Contribution(s)

|

||||

with the Work to which such Contribution(s) was submitted. If You

|

||||

institute patent litigation against any entity (including a

|

||||

cross-claim or counterclaim in a lawsuit) alleging that the Work

|

||||

or a Contribution incorporated within the Work constitutes direct

|

||||

or contributory patent infringement, then any patent licenses

|

||||

granted to You under this License for that Work shall terminate

|

||||

as of the date such litigation is filed.

|

||||

|

||||

4. Redistribution. You may reproduce and distribute copies of the

|

||||

Work or Derivative Works thereof in any medium, with or without

|

||||

modifications, and in Source or Object form, provided that You

|

||||

meet the following conditions:

|

||||

|

||||

(a) You must give any other recipients of the Work or

|

||||

Derivative Works a copy of this License; and

|

||||

|

||||

(b) You must cause any modified files to carry prominent notices

|

||||

stating that You changed the files; and

|

||||

|

||||

(c) You must retain, in the Source form of any Derivative Works

|

||||

that You distribute, all copyright, patent, trademark, and

|

||||

attribution notices from the Source form of the Work,

|

||||

excluding those notices that do not pertain to any part of

|

||||

the Derivative Works; and

|

||||

|

||||

(d) If the Work includes a "NOTICE" text file as part of its

|

||||

distribution, then any Derivative Works that You distribute must

|

||||

include a readable copy of the attribution notices contained

|

||||

within such NOTICE file, excluding those notices that do not

|

||||

pertain to any part of the Derivative Works, in at least one

|

||||

of the following places: within a NOTICE text file distributed

|

||||

as part of the Derivative Works; within the Source form or

|

||||

documentation, if provided along with the Derivative Works; or,

|

||||

within a display generated by the Derivative Works, if and

|

||||

wherever such third-party notices normally appear. The contents

|

||||

of the NOTICE file are for informational purposes only and

|

||||

do not modify the License. You may add Your own attribution

|

||||

notices within Derivative Works that You distribute, alongside

|

||||

or as an addendum to the NOTICE text from the Work, provided

|

||||

that such additional attribution notices cannot be construed

|

||||

as modifying the License.

|

||||

|

||||

You may add Your own copyright statement to Your modifications and

|

||||

may provide additional or different license terms and conditions

|

||||

for use, reproduction, or distribution of Your modifications, or

|

||||

for any such Derivative Works as a whole, provided Your use,

|

||||

reproduction, and distribution of the Work otherwise complies with

|

||||

the conditions stated in this License.

|

||||

|

||||

5. Submission of Contributions. Unless You explicitly state otherwise,

|

||||

any Contribution intentionally submitted for inclusion in the Work

|

||||

by You to the Licensor shall be under the terms and conditions of

|

||||

this License, without any additional terms or conditions.

|

||||

Notwithstanding the above, nothing herein shall supersede or modify

|

||||

the terms of any separate license agreement you may have executed

|

||||

with Licensor regarding such Contributions.

|

||||

|

||||

6. Trademarks. This License does not grant permission to use the trade

|

||||

names, trademarks, service marks, or product names of the Licensor,

|

||||

except as required for reasonable and customary use in describing the

|

||||

origin of the Work and reproducing the content of the NOTICE file.

|

||||

|

||||

7. Disclaimer of Warranty. Unless required by applicable law or

|

||||

agreed to in writing, Licensor provides the Work (and each

|

||||

Contributor provides its Contributions) on an "AS IS" BASIS,

|

||||

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

|

||||

implied, including, without limitation, any warranties or conditions

|

||||

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

|

||||

PARTICULAR PURPOSE. You are solely responsible for determining the

|

||||

appropriateness of using or redistributing the Work and assume any

|

||||

risks associated with Your exercise of permissions under this License.

|

||||

|

||||

8. Limitation of Liability. In no event and under no legal theory,

|

||||

whether in tort (including negligence), contract, or otherwise,

|

||||

unless required by applicable law (such as deliberate and grossly

|

||||

negligent acts) or agreed to in writing, shall any Contributor be

|

||||

liable to You for damages, including any direct, indirect, special,

|

||||

incidental, or consequential damages of any character arising as a

|

||||

result of this License or out of the use or inability to use the

|

||||

Work (including but not limited to damages for loss of goodwill,

|

||||

work stoppage, computer failure or malfunction, or any and all

|

||||

other commercial damages or losses), even if such Contributor

|

||||

has been advised of the possibility of such damages.

|

||||

|

||||

9. Accepting Warranty or Additional Liability. While redistributing

|

||||

the Work or Derivative Works thereof, You may choose to offer,

|

||||

and charge a fee for, acceptance of support, warranty, indemnity,

|

||||

or other liability obligations and/or rights consistent with this

|

||||

License. However, in accepting such obligations, You may act only

|

||||

on Your own behalf and on Your sole responsibility, not on behalf

|

||||

of any other Contributor, and only if You agree to indemnify,

|

||||

defend, and hold each Contributor harmless for any liability

|

||||

incurred by, or claims asserted against, such Contributor by reason

|

||||

of your accepting any such warranty or additional liability.

|

||||

|

||||

END OF TERMS AND CONDITIONS

|

||||

|

||||

APPENDIX: How to apply the Apache License to your work.

|

||||

|

||||

To apply the Apache License to your work, attach the following

|

||||

boilerplate notice, with the fields enclosed by brackets "[]"

|

||||

replaced with your own identifying information. (Don't include

|

||||

the brackets!) The text should be enclosed in the appropriate

|

||||

comment syntax for the file format. We also recommend that a

|

||||

file or class name and description of purpose be included on the

|

||||

same "printed page" as the copyright notice for easier

|

||||

identification within third-party archives.

|

||||

|

||||

Copyright 2024 Alibaba Cloud

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License");

|

||||

you may not use this file except in compliance with the License.

|

||||

You may obtain a copy of the License at

|

||||

|

||||

http://www.apache.org/licenses/LICENSE-2.0

|

||||

|

||||

Unless required by applicable law or agreed to in writing, software

|

||||

distributed under the License is distributed on an "AS IS" BASIS,

|

||||

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

See the License for the specific language governing permissions and

|

||||

limitations under the License.

|

||||

116

README.md

|

|

@ -1,3 +1,115 @@

|

|||

# Qwen2.5-Math-7B-Instruct_a13571055665541120819061

|

||||

---
base_model: Qwen/Qwen2.5-Math-7B
language:
- en
pipeline_tag: text-generation
tags:
- chat
library_name: transformers
license: apache-2.0
license_link: https://huggingface.co/Qwen/Qwen2.5-Math-7B-Instruct/blob/main/LICENSE
---

|

||||

|

||||

Qwen2.5-Math-7B-Instruct

|

||||

|

||||

# Qwen2.5-Math-7B-Instruct

|

||||

|

||||

> [!Warning]
> <div align="center">
> <b>
> 🚨 Qwen2.5-Math mainly supports solving English and Chinese math problems through CoT and TIR. We do not recommend using this series of models for other tasks.
> </b>
> </div>

|

||||

|

||||

## Introduction

|

||||

|

||||

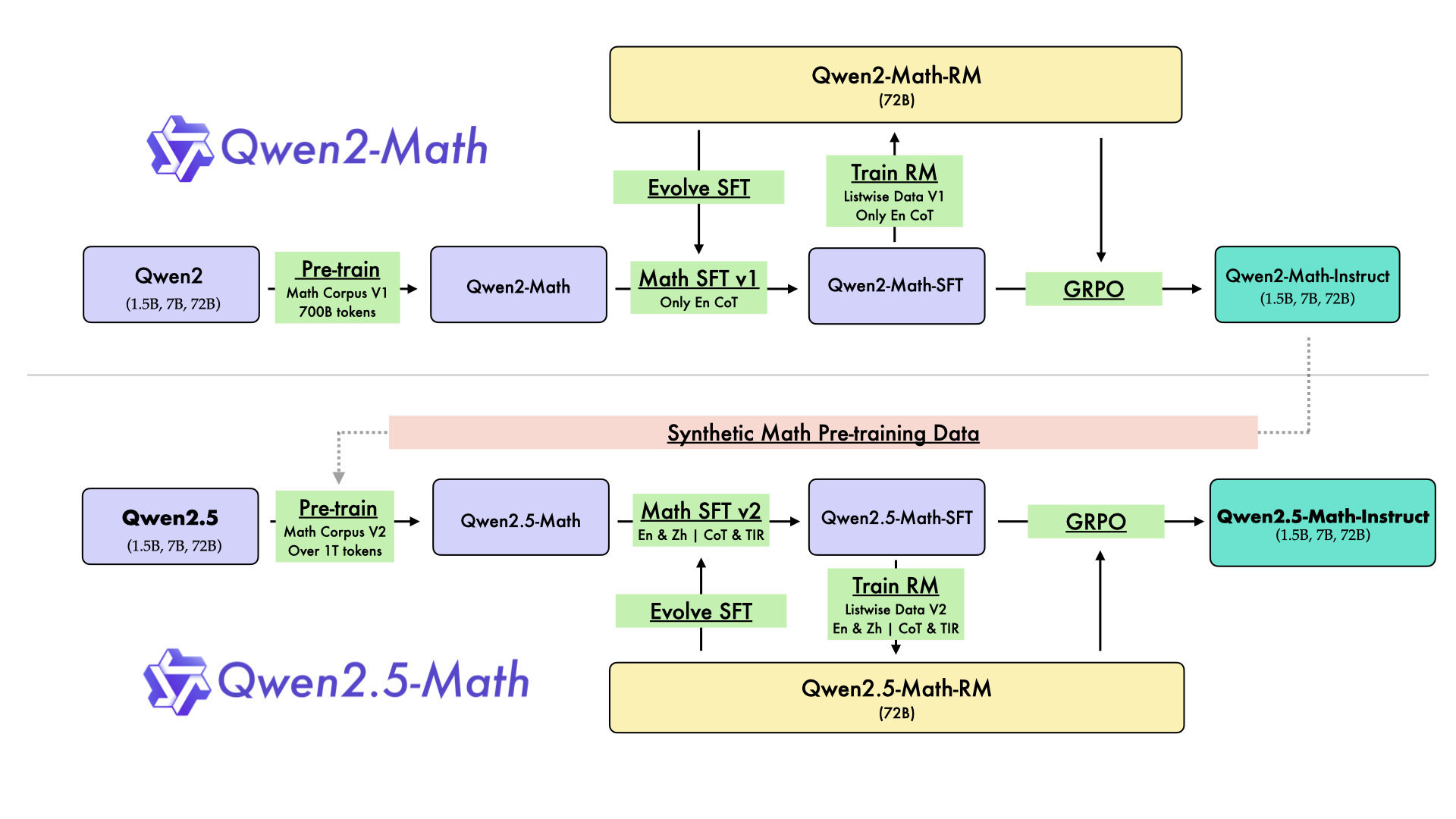

In August 2024, we released [Qwen2-Math](https://qwenlm.github.io/blog/qwen2-math/), the first series of mathematical LLMs in our Qwen family. A month later, we upgraded it and open-sourced the **Qwen2.5-Math** series, including the base models **Qwen2.5-Math-1.5B/7B/72B**, the instruction-tuned models **Qwen2.5-Math-1.5B/7B/72B-Instruct**, and the mathematical reward model **Qwen2.5-Math-RM-72B**.

|

||||

|

||||

Unlike the Qwen2-Math series, which only supports using Chain-of-Thought (CoT) to solve English math problems, the Qwen2.5-Math series has been expanded to support both CoT and Tool-integrated Reasoning (TIR) for solving math problems in both Chinese and English. The Qwen2.5-Math series models achieve significant performance improvements over the Qwen2-Math series models on the Chinese and English mathematics benchmarks with CoT.

|

||||

|

||||

|

||||

|

||||

While CoT plays a vital role in enhancing the reasoning capabilities of LLMs, it faces challenges in achieving computational accuracy and handling complex mathematical or algorithmic reasoning tasks, such as finding the roots of a quadratic equation or computing the eigenvalues of a matrix. TIR can further improve the model's proficiency in precise computation, symbolic manipulation, and algorithmic manipulation. Qwen2.5-Math-1.5B/7B/72B-Instruct achieve 79.7, 85.3, and 87.8 respectively on the MATH benchmark using TIR.
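To make the distinction concrete, here is a minimal sketch of the kind of exact computation that TIR hands off to a program instead of carrying out step by step in text. It is only an illustration of the quadratic-root example mentioned above, not actual model output; the function name `quadratic_roots` is hypothetical.

```python
import cmath


def quadratic_roots(a, b, c):
    """Return both roots of a*x**2 + b*x + c = 0 (a must be non-zero).

    Hypothetical helper for illustration only. cmath.sqrt is used so that
    a negative discriminant yields complex roots instead of an error.
    """
    if a == 0:
        raise ValueError("not a quadratic equation: a must be non-zero")
    disc = cmath.sqrt(b * b - 4 * a * c)
    return (-b + disc) / (2 * a), (-b - disc) / (2 * a)


# x^2 - 3x + 2 = 0 factors as (x - 2)(x - 1), so the roots are 2 and 1.
r1, r2 = quadratic_roots(1, -3, 2)
print(r1, r2)
```

With CoT alone, the model would have to perform this arithmetic token by token, which is where numerical slips tend to creep in.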

|

||||

|

||||

## Model Details

|

||||

|

||||

|

||||

For more details, please refer to our [blog post](https://qwenlm.github.io/blog/qwen2.5-math/) and [GitHub repo](https://github.com/QwenLM/Qwen2.5-Math).

|

||||

|

||||

|

||||

## Requirements

|

||||

* `transformers>=4.37.0` for Qwen2.5-Math models. The latest version is recommended.

|

||||

|

||||

> [!Warning]
> <div align="center">
> <b>
> 🚨 This is required because <code>transformers</code> has included the Qwen2 code since <code>4.37.0</code>.
> </b>
> </div>

|

||||

|

||||

For requirements on GPU memory and the respective throughput, see similar results of Qwen2 [here](https://qwen.readthedocs.io/en/latest/benchmark/speed_benchmark.html).
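If you want to fail early on an outdated install, the `transformers>=4.37.0` requirement above can be checked before loading the model. The sketch below is one possible way to do it with the standard library only; `parse_version` and `meets_minimum` are hypothetical helper names, and the parser deliberately ignores suffixes such as `.dev0`.

```python
def parse_version(version):
    """Turn '4.43.1' or '4.38.0.dev0' into a 3-tuple of integers.

    Hypothetical helper: only the leading numeric components are kept,
    and missing components are padded with zeros.
    """
    parts = []
    for piece in version.split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        if not digits:
            break
        parts.append(int(digits))
    while len(parts) < 3:
        parts.append(0)
    return tuple(parts[:3])


def meets_minimum(installed, minimum="4.37.0"):
    """Return True if the installed version is at least the minimum."""
    return parse_version(installed) >= parse_version(minimum)


# Typical use (assumes transformers is installed):
#   import transformers
#   if not meets_minimum(transformers.__version__):
#       raise RuntimeError("Qwen2.5-Math needs transformers>=4.37.0")
print(meets_minimum("4.43.1"), meets_minimum("4.36.2"))
```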

|

||||

|

||||

## Quick Start

|

||||

|

||||

> [!Important]
>
> **Qwen2.5-Math-7B-Instruct** is an instruction model for chatting;
>
> **Qwen2.5-Math-7B** is a base model typically used for completion and few-shot inference, serving as a better starting point for fine-tuning.

|

||||

|

||||

### 🤗 Hugging Face Transformers

|

||||

|

||||

Qwen2.5-Math can be deployed and used for inference in the same way as [Qwen2.5](https://github.com/QwenLM/Qwen2.5). The following snippet shows how to use the chat model with `transformers`:

|

||||

|

||||

```python
from modelscope import AutoModelForCausalLM, AutoTokenizer

model_name = "qwen/Qwen2.5-Math-7B-Instruct"
device = "cuda" # the device to load the model onto

model = AutoModelForCausalLM.from_pretrained(
    model_name,
    torch_dtype="auto",
    device_map="auto"
)
tokenizer = AutoTokenizer.from_pretrained(model_name)

prompt = "Find the value of $x$ that satisfies the equation $4x+5 = 6x+7$."
messages = [
    {"role": "system", "content": "You are Qwen, created by Alibaba Cloud. You are a helpful assistant."},
    {"role": "user", "content": prompt}
]
text = tokenizer.apply_chat_template(
    messages,
    tokenize=False,
    add_generation_prompt=True
)
model_inputs = tokenizer([text], return_tensors="pt").to(device)

generated_ids = model.generate(
    **model_inputs,
    max_new_tokens=512
)
generated_ids = [
    output_ids[len(input_ids):] for input_ids, output_ids in zip(model_inputs.input_ids, generated_ids)
]

response = tokenizer.batch_decode(generated_ids, skip_special_tokens=True)[0]
```
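The list comprehension near the end of the snippet is easy to misread, so here is a small sketch of what it does, using plain Python lists in place of tensors. For decoder-only models, `generate` returns each prompt followed by the newly generated tokens, so slicing off the first `len(input_ids)` entries leaves only the reply. The token ids below are made up for illustration, and `strip_prompt_tokens` is a hypothetical name.

```python
def strip_prompt_tokens(input_ids_batch, output_ids_batch):
    """Drop the echoed prompt from each generated sequence.

    Mirrors the slicing in the snippet above: every output sequence starts
    with its own prompt, so we keep only what comes after it.
    """
    return [
        output_ids[len(input_ids):]
        for input_ids, output_ids in zip(input_ids_batch, output_ids_batch)
    ]


# Made-up token ids: a 3-token prompt followed by 3 generated tokens.
prompt_batch = [[101, 102, 103]]
generated_batch = [[101, 102, 103, 7, 8, 9]]

new_tokens = strip_prompt_tokens(prompt_batch, generated_batch)
print(new_tokens)  # [[7, 8, 9]]
```

Without this step, `batch_decode` would return the system prompt and the question along with the answer.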

|

||||

|

||||

### 🤖 ModelScope

|

||||

We strongly advise users, especially those in mainland China, to use ModelScope. `snapshot_download` can help you solve issues with downloading checkpoints.
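As a rough sketch of how `snapshot_download` might be used, the helper below downloads the checkpoint (or reuses a cached copy) and returns the local directory. The wrapper name `download_checkpoint` and the lazy import are our own choices for illustration; it assumes the `modelscope` package is installed and that the model id matches the one used in the snippet above.

```python
MODEL_ID = "qwen/Qwen2.5-Math-7B-Instruct"


def download_checkpoint(model_id=MODEL_ID, cache_dir=None):
    """Download the checkpoint from ModelScope and return its local path.

    Hypothetical wrapper. The import is done lazily so that merely defining
    this function does not require modelscope to be installed.
    """
    from modelscope import snapshot_download

    return snapshot_download(model_id, cache_dir=cache_dir)


# Typical use (downloads several GB on first call):
#   local_dir = download_checkpoint()
#   model = AutoModelForCausalLM.from_pretrained(local_dir, torch_dtype="auto", device_map="auto")
```

Passing the returned directory to `from_pretrained` avoids a second download attempt when the model is loaded.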

|

||||

|

||||

|

||||

## Citation

|

||||

|

||||

If you find our work helpful, feel free to give us a citation.

|

||||

|

||||

```
@article{yang2024qwen2,
  title={Qwen2 technical report},
  author={Yang, An and Yang, Baosong and Hui, Binyuan and Zheng, Bo and Yu, Bowen and Zhou, Chang and Li, Chengpeng and Li, Chengyuan and Liu, Dayiheng and Huang, Fei and others},
  journal={arXiv preprint arXiv:2407.10671},
  year={2024}
}
```

|

||||

|

|

@ -0,0 +1,27 @@

|

|||

{
  "architectures": [
    "Qwen2ForCausalLM"
  ],
  "attention_dropout": 0.0,
  "bos_token_id": 151643,
  "eos_token_id": 151645,
  "hidden_act": "silu",
  "hidden_size": 3584,
  "initializer_range": 0.02,
  "intermediate_size": 18944,
  "max_position_embeddings": 4096,
  "max_window_layers": 28,
  "model_type": "qwen2",
  "num_attention_heads": 28,
  "num_hidden_layers": 28,
  "num_key_value_heads": 4,
  "rms_norm_eps": 1e-06,
  "rope_theta": 10000.0,
  "sliding_window": 4096,
  "tie_word_embeddings": false,
  "torch_dtype": "bfloat16",
  "transformers_version": "4.43.1",
  "use_cache": true,
  "use_sliding_window": false,
  "vocab_size": 152064
}
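A few useful quantities follow directly from this config. The sketch below derives the attention head size, the grouped-query attention ratio, an approximate parameter count, and the KV-cache footprint per token. It assumes the layer layout visible in the safetensors weight map in this commit (biases on `q_proj`, `k_proj`, and `v_proj` only) plus a single final norm vector, which is not visible in the excerpt and is therefore an assumption. The function names are hypothetical.

```python
config = {
    "hidden_size": 3584,
    "intermediate_size": 18944,
    "num_hidden_layers": 28,
    "num_attention_heads": 28,
    "num_key_value_heads": 4,
    "vocab_size": 152064,
    "tie_word_embeddings": False,
}


def derived_shapes(cfg):
    """Return (head_dim, kv_dim, query heads per KV head)."""
    head_dim = cfg["hidden_size"] // cfg["num_attention_heads"]
    kv_dim = head_dim * cfg["num_key_value_heads"]
    group = cfg["num_attention_heads"] // cfg["num_key_value_heads"]
    return head_dim, kv_dim, group


def parameter_count(cfg):
    """Count weights, assuming q/k/v biases and one final norm (assumption)."""
    h, ffn, vocab = cfg["hidden_size"], cfg["intermediate_size"], cfg["vocab_size"]
    _, kv_dim, _ = derived_shapes(cfg)
    attn = (h * h + h) + 2 * (h * kv_dim + kv_dim) + h * h  # q, k, v (with bias), o
    mlp = 3 * h * ffn                                        # gate, up, down
    norms = 2 * h                                            # two norms per layer
    embeddings = vocab * h * (1 if cfg["tie_word_embeddings"] else 2)
    return cfg["num_hidden_layers"] * (attn + mlp + norms) + embeddings + h


def kv_cache_bytes_per_token(cfg, bytes_per_value=2):
    """Keys plus values across all layers; 2 bytes per value for bfloat16."""
    _, kv_dim, _ = derived_shapes(cfg)
    return 2 * cfg["num_hidden_layers"] * kv_dim * bytes_per_value


print(derived_shapes(config))            # (128, 512, 7)
print(parameter_count(config))           # 7615616512
print(kv_cache_bytes_per_token(config))  # 57344
```

At 2 bytes per bfloat16 weight, this count comes to 15231233024 bytes, which agrees with the `total_size` recorded in the safetensors index in this commit.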

|

||||

|

|

@ -0,0 +1 @@

|

|||

{}

|

||||

|

|

@ -0,0 +1,10 @@

|

|||

{
  "bos_token_id": 151643,
  "pad_token_id": 151643,
  "do_sample": false,
  "eos_token_id": [
    151645,
    151643
  ],
  "transformers_version": "4.37.0"
}

|

||||

File diff suppressed because it is too large

Binary file not shown.

Binary file not shown.

Binary file not shown.

Binary file not shown.

|

|

@ -0,0 +1,346 @@

|

|||

{

|

||||

"metadata": {

|

||||

"total_size": 15231233024

|

||||

},

|

||||

"weight_map": {

|

||||

"lm_head.weight": "model-00004-of-00004.safetensors",

|

||||

"model.embed_tokens.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.0.input_layernorm.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.0.mlp.down_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.0.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.0.mlp.up_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.0.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.0.self_attn.k_proj.bias": "model-00001-of-00004.safetensors",

|

||||

"model.layers.0.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.0.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.0.self_attn.q_proj.bias": "model-00001-of-00004.safetensors",

|

||||

"model.layers.0.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.0.self_attn.v_proj.bias": "model-00001-of-00004.safetensors",

|

||||

"model.layers.0.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.1.input_layernorm.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.1.mlp.down_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.1.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.1.mlp.up_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.1.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.1.self_attn.k_proj.bias": "model-00001-of-00004.safetensors",

|

||||

"model.layers.1.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.1.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.1.self_attn.q_proj.bias": "model-00001-of-00004.safetensors",

|

||||

"model.layers.1.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.1.self_attn.v_proj.bias": "model-00001-of-00004.safetensors",

|

||||

"model.layers.1.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",

|

||||

"model.layers.10.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.10.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.10.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.10.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.10.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.10.self_attn.k_proj.bias": "model-00002-of-00004.safetensors",

|

||||

"model.layers.10.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.10.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.10.self_attn.q_proj.bias": "model-00002-of-00004.safetensors",

|

||||

"model.layers.10.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.10.self_attn.v_proj.bias": "model-00002-of-00004.safetensors",

|

||||

"model.layers.10.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.11.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.11.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.11.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.11.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.11.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.11.self_attn.k_proj.bias": "model-00002-of-00004.safetensors",

|

||||

"model.layers.11.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.11.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.11.self_attn.q_proj.bias": "model-00002-of-00004.safetensors",

|

||||

"model.layers.11.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.11.self_attn.v_proj.bias": "model-00002-of-00004.safetensors",

|

||||

"model.layers.11.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.12.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.12.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.12.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.12.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.12.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.12.self_attn.k_proj.bias": "model-00002-of-00004.safetensors",

|

||||

"model.layers.12.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.12.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.12.self_attn.q_proj.bias": "model-00002-of-00004.safetensors",

|

||||

"model.layers.12.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.12.self_attn.v_proj.bias": "model-00002-of-00004.safetensors",

|

||||

"model.layers.12.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.13.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.13.mlp.down_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.13.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.13.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.13.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.13.self_attn.k_proj.bias": "model-00002-of-00004.safetensors",

|

||||

"model.layers.13.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.13.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.13.self_attn.q_proj.bias": "model-00002-of-00004.safetensors",

|

||||

"model.layers.13.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.13.self_attn.v_proj.bias": "model-00002-of-00004.safetensors",

|

||||

"model.layers.13.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.14.input_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.14.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.14.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.14.mlp.up_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.14.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.14.self_attn.k_proj.bias": "model-00002-of-00004.safetensors",

|

||||

"model.layers.14.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.14.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.14.self_attn.q_proj.bias": "model-00002-of-00004.safetensors",

|

||||

"model.layers.14.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.14.self_attn.v_proj.bias": "model-00002-of-00004.safetensors",

|

||||

"model.layers.14.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",

|

||||

"model.layers.15.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.15.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.15.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.15.mlp.up_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.15.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.15.self_attn.k_proj.bias": "model-00003-of-00004.safetensors",

|

||||

"model.layers.15.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.15.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.15.self_attn.q_proj.bias": "model-00003-of-00004.safetensors",

|

||||

"model.layers.15.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.15.self_attn.v_proj.bias": "model-00003-of-00004.safetensors",

|

||||

"model.layers.15.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.16.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.16.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.16.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.16.mlp.up_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.16.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.16.self_attn.k_proj.bias": "model-00003-of-00004.safetensors",

|

||||

"model.layers.16.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.16.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.16.self_attn.q_proj.bias": "model-00003-of-00004.safetensors",

|

||||

"model.layers.16.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.16.self_attn.v_proj.bias": "model-00003-of-00004.safetensors",

|

||||

"model.layers.16.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.17.input_layernorm.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.17.mlp.down_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.17.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.17.mlp.up_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.17.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.17.self_attn.k_proj.bias": "model-00003-of-00004.safetensors",

|

||||

"model.layers.17.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.17.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",

|

||||

"model.layers.17.self_attn.q_proj.bias": "model-00003-of-00004.safetensors",

|

||||

    "model.layers.17.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.17.self_attn.v_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.17.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.18.input_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.18.mlp.down_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.18.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.18.mlp.up_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.18.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.18.self_attn.k_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.18.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.18.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.18.self_attn.q_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.18.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.18.self_attn.v_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.18.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.19.input_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.19.mlp.down_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.19.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.19.mlp.up_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.19.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.19.self_attn.k_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.19.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.19.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.19.self_attn.q_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.19.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.19.self_attn.v_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.19.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.2.input_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.2.mlp.down_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.2.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.2.mlp.up_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.2.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.2.self_attn.k_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.2.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.2.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.2.self_attn.q_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.2.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.2.self_attn.v_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.2.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.20.input_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.20.mlp.down_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.20.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.20.mlp.up_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.20.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.20.self_attn.k_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.20.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.20.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.20.self_attn.q_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.20.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.20.self_attn.v_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.20.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.21.input_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.21.mlp.down_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.21.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.21.mlp.up_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.21.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.21.self_attn.k_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.21.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.21.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.21.self_attn.q_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.21.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.21.self_attn.v_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.21.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.22.input_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.22.mlp.down_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.22.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.22.mlp.up_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.22.post_attention_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.22.self_attn.k_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.22.self_attn.k_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.22.self_attn.o_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.22.self_attn.q_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.22.self_attn.q_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.22.self_attn.v_proj.bias": "model-00003-of-00004.safetensors",
    "model.layers.22.self_attn.v_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.23.input_layernorm.weight": "model-00004-of-00004.safetensors",
    "model.layers.23.mlp.down_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.23.mlp.gate_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.23.mlp.up_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.23.post_attention_layernorm.weight": "model-00004-of-00004.safetensors",
    "model.layers.23.self_attn.k_proj.bias": "model-00004-of-00004.safetensors",
    "model.layers.23.self_attn.k_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.23.self_attn.o_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.23.self_attn.q_proj.bias": "model-00004-of-00004.safetensors",
    "model.layers.23.self_attn.q_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.23.self_attn.v_proj.bias": "model-00004-of-00004.safetensors",
    "model.layers.23.self_attn.v_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.24.input_layernorm.weight": "model-00004-of-00004.safetensors",
    "model.layers.24.mlp.down_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.24.mlp.gate_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.24.mlp.up_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.24.post_attention_layernorm.weight": "model-00004-of-00004.safetensors",
    "model.layers.24.self_attn.k_proj.bias": "model-00004-of-00004.safetensors",
    "model.layers.24.self_attn.k_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.24.self_attn.o_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.24.self_attn.q_proj.bias": "model-00004-of-00004.safetensors",
    "model.layers.24.self_attn.q_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.24.self_attn.v_proj.bias": "model-00004-of-00004.safetensors",
    "model.layers.24.self_attn.v_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.25.input_layernorm.weight": "model-00004-of-00004.safetensors",
    "model.layers.25.mlp.down_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.25.mlp.gate_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.25.mlp.up_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.25.post_attention_layernorm.weight": "model-00004-of-00004.safetensors",
    "model.layers.25.self_attn.k_proj.bias": "model-00004-of-00004.safetensors",
    "model.layers.25.self_attn.k_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.25.self_attn.o_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.25.self_attn.q_proj.bias": "model-00004-of-00004.safetensors",
    "model.layers.25.self_attn.q_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.25.self_attn.v_proj.bias": "model-00004-of-00004.safetensors",
    "model.layers.25.self_attn.v_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.26.input_layernorm.weight": "model-00004-of-00004.safetensors",
    "model.layers.26.mlp.down_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.26.mlp.gate_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.26.mlp.up_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.26.post_attention_layernorm.weight": "model-00004-of-00004.safetensors",
    "model.layers.26.self_attn.k_proj.bias": "model-00004-of-00004.safetensors",
    "model.layers.26.self_attn.k_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.26.self_attn.o_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.26.self_attn.q_proj.bias": "model-00004-of-00004.safetensors",
    "model.layers.26.self_attn.q_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.26.self_attn.v_proj.bias": "model-00004-of-00004.safetensors",
    "model.layers.26.self_attn.v_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.27.input_layernorm.weight": "model-00004-of-00004.safetensors",
    "model.layers.27.mlp.down_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.27.mlp.gate_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.27.mlp.up_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.27.post_attention_layernorm.weight": "model-00004-of-00004.safetensors",
    "model.layers.27.self_attn.k_proj.bias": "model-00004-of-00004.safetensors",
    "model.layers.27.self_attn.k_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.27.self_attn.o_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.27.self_attn.q_proj.bias": "model-00004-of-00004.safetensors",
    "model.layers.27.self_attn.q_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.27.self_attn.v_proj.bias": "model-00004-of-00004.safetensors",
    "model.layers.27.self_attn.v_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.3.input_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.3.mlp.down_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.3.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.3.mlp.up_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.3.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.3.self_attn.k_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.3.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.3.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.3.self_attn.q_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.3.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.3.self_attn.v_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.3.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.4.input_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.4.mlp.down_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.4.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.4.mlp.up_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.4.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.4.self_attn.k_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.4.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.4.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.4.self_attn.q_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.4.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.4.self_attn.v_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.4.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.5.input_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.5.mlp.down_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.5.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.5.mlp.up_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.5.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.5.self_attn.k_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.5.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.5.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.5.self_attn.q_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.5.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.5.self_attn.v_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.5.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.6.input_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.6.mlp.down_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.6.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.6.mlp.up_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.6.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.6.self_attn.k_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.6.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.6.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.6.self_attn.q_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.6.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.6.self_attn.v_proj.bias": "model-00001-of-00004.safetensors",
    "model.layers.6.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",
    "model.layers.7.input_layernorm.weight": "model-00002-of-00004.safetensors",
    "model.layers.7.mlp.down_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.7.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.7.mlp.up_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.7.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",
    "model.layers.7.self_attn.k_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.7.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.7.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.7.self_attn.q_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.7.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.7.self_attn.v_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.7.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.8.input_layernorm.weight": "model-00002-of-00004.safetensors",
    "model.layers.8.mlp.down_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.8.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.8.mlp.up_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.8.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",
    "model.layers.8.self_attn.k_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.8.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.8.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.8.self_attn.q_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.8.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.8.self_attn.v_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.8.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.9.input_layernorm.weight": "model-00002-of-00004.safetensors",
    "model.layers.9.mlp.down_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.9.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.9.mlp.up_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.9.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",
    "model.layers.9.self_attn.k_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.9.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.9.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.9.self_attn.q_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.9.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",
    "model.layers.9.self_attn.v_proj.bias": "model-00002-of-00004.safetensors",
    "model.layers.9.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",
    "model.norm.weight": "model-00004-of-00004.safetensors"
  }

}
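The map above places most layers wholly in one shard, but a few straddle a shard boundary (for example, `model.layers.22.mlp.down_proj.weight` sits in shard 4 while the rest of layer 22 is in shard 3). A minimal sketch of how one might detect such layers, assuming the mapping has been loaded into a plain Python dict; the helper names `shards_per_layer` and `split_layers` are hypothetical, and the sample dict holds only a handful of entries copied from the listing:

```python
import re
from collections import defaultdict

# A few entries copied from the listing above (not the full map).
weight_map = {
    "model.layers.22.input_layernorm.weight": "model-00003-of-00004.safetensors",
    "model.layers.22.mlp.down_proj.weight": "model-00004-of-00004.safetensors",
    "model.layers.22.mlp.gate_proj.weight": "model-00003-of-00004.safetensors",
    "model.layers.23.input_layernorm.weight": "model-00004-of-00004.safetensors",
    "model.layers.6.input_layernorm.weight": "model-00001-of-00004.safetensors",
    "model.layers.6.mlp.down_proj.weight": "model-00002-of-00004.safetensors",
    "model.norm.weight": "model-00004-of-00004.safetensors",
}

# Matches names such as "model.layers.22.mlp.down_proj.weight".
LAYER_RE = re.compile(r"^model\.layers\.(\d+)\.")


def shards_per_layer(mapping):
    """Return {layer_index: set of shard files holding that layer's tensors}."""
    layers = defaultdict(set)
    for tensor_name, shard_file in mapping.items():
        match = LAYER_RE.match(tensor_name)
        if match:  # skip non-layer tensors such as model.norm.weight
            layers[int(match.group(1))].add(shard_file)
    return dict(layers)


def split_layers(mapping):
    """Return the sorted indices of layers whose tensors span several shards."""
    return sorted(
        layer for layer, shards in shards_per_layer(mapping).items()
        if len(shards) > 1
    )


print(split_layers(weight_map))  # prints [6, 22]
```

Parsing the layer index as an integer also sidesteps the lexicographic key order seen in the file, where layer 2 sorts between layers 19 and 20.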


File diff suppressed because it is too large


@ -0,0 +1,207 @@

{
  "add_bos_token": false,
  "add_prefix_space": false,
  "added_tokens_decoder": {
    "151643": {
      "content": "<|endoftext|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151644": {
      "content": "<|im_start|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151645": {
      "content": "<|im_end|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151646": {
      "content": "<|object_ref_start|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151647": {
      "content": "<|object_ref_end|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151648": {
      "content": "<|box_start|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151649": {
      "content": "<|box_end|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151650": {
      "content": "<|quad_start|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151651": {
      "content": "<|quad_end|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151652": {
      "content": "<|vision_start|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151653": {
      "content": "<|vision_end|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151654": {
      "content": "<|vision_pad|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151655": {
      "content": "<|image_pad|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151656": {
      "content": "<|video_pad|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": true
    },
    "151657": {
      "content": "<tool_call>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151658": {
      "content": "</tool_call>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151659": {
      "content": "<|fim_prefix|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151660": {
      "content": "<|fim_middle|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151661": {
      "content": "<|fim_suffix|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151662": {
      "content": "<|fim_pad|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151663": {
      "content": "<|repo_name|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    },
    "151664": {
      "content": "<|file_sep|>",
      "lstrip": false,
      "normalized": false,
      "rstrip": false,
      "single_word": false,
      "special": false
    }
  },
  "additional_special_tokens": [
    "<|im_start|>",
    "<|im_end|>",
    "<|object_ref_start|>",
    "<|object_ref_end|>",
    "<|box_start|>",
    "<|box_end|>",
    "<|quad_start|>",
    "<|quad_end|>",
    "<|vision_start|>",
    "<|vision_end|>",
    "<|vision_pad|>",
    "<|image_pad|>",
    "<|video_pad|>"
  ],
  "bos_token": null,
  "chat_template": "{%- if tools %}\n {{- '<|im_start|>system\\n' }}\n {%- if messages[0]['role'] == 'system' %}\n {{- messages[0]['content'] }}\n {%- else %}\n {{- 'Please reason step by step, and put your final answer within \\\\boxed{}.' }}\n {%- endif %}\n {{- \"\\n\\n# Tools\\n\\nYou may call one or more functions to assist with the user query.\\n\\nYou are provided with function signatures within <tools></tools> XML tags:\\n<tools>\" }}\n {%- for tool in tools %}\n {{- \"\\n\" }}\n {{- tool | tojson }}\n {%- endfor %}\n {{- \"\\n</tools>\\n\\nFor each function call, return a json object with function name and arguments within <tool_call></tool_call> XML tags:\\n<tool_call>\\n{\\\"name\\\": <function-name>, \\\"arguments\\\": <args-json-object>}\\n</tool_call><|im_end|>\\n\" }}\n{%- else %}\n {%- if messages[0]['role'] == 'system' %}\n {{- '<|im_start|>system\\n' + messages[0]['content'] + '<|im_end|>\\n' }}\n {%- else %}\n {{- '<|im_start|>system\\nPlease reason step by step, and put your final answer within \\\\boxed{}.<|im_end|>\\n' }}\n {%- endif %}\n{%- endif %}\n{%- for message in messages %}\n {%- if (message.role == \"user\") or (message.role == \"system\" and not loop.first) or (message.role == \"assistant\" and not message.tool_calls) %}\n {{- '<|im_start|>' + message.role + '\\n' + message.content + '<|im_end|>' + '\\n' }}\n {%- elif message.role == \"assistant\" %}\n {{- '<|im_start|>' + message.role }}\n {%- if message.content %}\n {{- '\\n' + message.content }}\n {%- endif %}\n {%- for tool_call in message.tool_calls %}\n {%- if tool_call.function is defined %}\n {%- set tool_call = tool_call.function %}\n {%- endif %}\n {{- '\\n<tool_call>\\n{\"name\": \"' }}\n {{- tool_call.name }}\n {{- '\", \"arguments\": ' }}\n {{- tool_call.arguments | tojson }}\n {{- '}\\n</tool_call>' }}\n {%- endfor %}\n {{- '<|im_end|>\\n' }}\n {%- elif message.role == \"tool\" %}\n {%- if (loop.index0 == 0) or (messages[loop.index0 - 1].role != \"tool\") %}\n {{- '<|im_start|>user' }}\n {%- endif %}\n {{- '\\n<tool_response>\\n' }}\n {{- message.content }}\n {{- '\\n</tool_response>' }}\n {%- if loop.last or (messages[loop.index0 + 1].role != \"tool\") %}\n {{- '<|im_end|>\\n' }}\n {%- endif %}\n {%- endif %}\n{%- endfor %}\n{%- if add_generation_prompt %}\n {{- '<|im_start|>assistant\\n' }}\n{%- endif %}\n",
  "clean_up_tokenization_spaces": false,
  "eos_token": "<|im_end|>",
  "errors": "replace",
  "model_max_length": 131072,
  "pad_token": "<|endoftext|>",
  "split_special_tokens": false,
  "tokenizer_class": "Qwen2Tokenizer",
  "unk_token": null
}
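The `chat_template` above is a Jinja template. To make its no-tools branch easier to follow, here is a plain-Python approximation of that branch only. The function name `render_chat` is hypothetical, tool calls and tool responses are deliberately left out, and in practice the Jinja template itself would be rendered by the tokenizer library rather than reimplemented like this:

```python
# Default system prompt used by the template when the first message
# is not a system message.
DEFAULT_SYSTEM = (
    "Please reason step by step, and put your final answer within \\boxed{}."
)


def render_chat(messages, add_generation_prompt=True):
    """Approximate the template's no-tools branch (hypothetical helper).

    `messages` is a list of {"role": ..., "content": ...} dicts. Assistant
    messages carrying tool calls and "tool" role messages are not handled.
    """
    parts = []

    # The system block always comes first: either the caller's system
    # message or the built-in default.
    if messages and messages[0]["role"] == "system":
        system_text = messages[0]["content"]
    else:
        system_text = DEFAULT_SYSTEM
    parts.append(f"<|im_start|>system\n{system_text}<|im_end|>\n")

    for index, message in enumerate(messages):
        role = message["role"]
        if role == "system" and index == 0:
            continue  # already emitted above
        if role in ("user", "system", "assistant"):
            parts.append(
                f"<|im_start|>{role}\n{message['content']}<|im_end|>\n"
            )

    # Open an assistant turn so the model continues from there.
    if add_generation_prompt:
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)


prompt = render_chat([{"role": "user", "content": "What is 2 + 2?"}])
print(prompt)
```

Each turn ends with `<|im_end|>`, which this config also sets as `eos_token`, so generation stops when the model closes its own turn.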


File diff suppressed because one or more lines are too long
